This Week I Voted

I voted this week. Twice, as it happens (vote early, vote often). This post is about my vote for Debian’s General Resolution: Init systems and systemd. You probably want to skip it, but I thought I’d write it up anyway.

[ 1 ] Choice 5: H: Support portability, without blocking progress

[ 2 ] Choice 4: D: Support non-systemd systems, without blocking progress

[ 3 ] Choice 7: G: Support portability and multiple implementations

[ 4 ] Choice 3: A: Support for multiple init systems is Important

[ 5 ] Choice 2: B: Systemd but we support exploring alternatives

[ 6 ] Choice 1: F: Focus on systemd

[ 7 ] Choice 6: E: Support for multiple init systems is Required

[ 8 ] Choice 8: Further Discussion

Firstly, I’ve re-ordered the ballot in the order I ranked things in. I find the mix of numbers and letters that don’t match up confusing, and I think the ordering on the ballot indicates the bias of whoever did the ordering. I don’t think that’s intended to be anything other than helpful, but I’d have kept the numbers and letters matching in the expected order.

I made use of Ian Jackson’s voting guide (and should disclose that he and I have had conversations about this matter where he kindly took time to explain to me his position and rationale). However I’m more pro-systemd than he is, and also lazier, so hopefully this post is useful in some fashion rather than a simple rehash of anyone else’s logic.

I ranked Further Discussion last. I want this to go away. I feel it’s still sucking too much of the project’s time.

E was easy to rank as second last. While I want to support people who want to run non-systemd setups I don’t want to force us as a project to have to shoehorn that support in where it’s not easily done.

I put F third last. While I welcome the improvements brought by systemd I’m wary of buying into any ecosystem completely, and it has a lot of tentacles which will make any future move much more difficult if we buy in wholesale (and make life unnecessarily difficult for people who want to avoid systemd, and I’ve no desire to do that).

On the flip side I think those who want to avoid systemd should be able to do so within Debian. I don’t buy the argument that you can just fork and drop systemd there, it’s an invasive change that makes it much, much harder to produce a derivative system. So it’s one of those things we should care about as a project. (If you hate systemd so much you don’t want even its libraries on your system I can’t help you.)

I debated over my ordering for H and D. I am in favour of portability principles, and I’m happy to make a statement that if someone is prepared to do the work of sorting out non-systemd support for a package then as a project we should take that. I read that as it’s not my responsibility as a maintainer to do these things (though obviously if I can easily do so I will), but that I shouldn’t get in the way of someone else doing so. As someone who has built things on top of Debian I subscribe to the idea that it should be suitable glue for such things (as well as something I can run directly on my own machines), so I favoured H.

That leaves A and G. I deferred to Ian here; I’d rather systemd wasn’t the de facto result despite best intentions, which results in placing G first of the two.

For balance you might want to read the posts by Bernd Zeimetz, Jonathan Dowland, Gunnar Wolf, Lucas Nussbaum, Philipp Kern, Sam Hartman and Wouter Verhelst.

Native IPv6: One Month Later

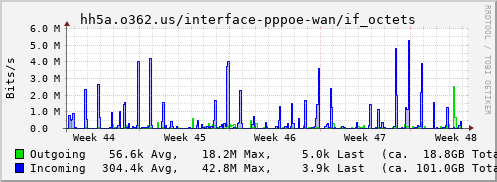

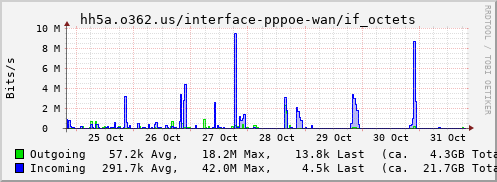

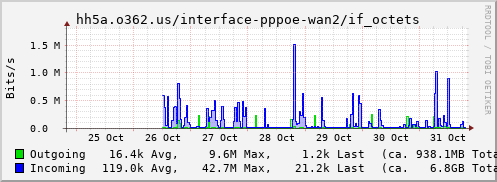

I wrote a month ago about getting native IPv6 over fibre. Given that this week RIPE announced they’d run out of IPv4 allocations and today my old connection was turned off, it seems an appropriate point to look at my v4 vs v6 usage over a month (as a reminder, I used my new FTTP connection for v6 only over the past month, with v4 still going over my old FTTC connection). I’m actually surprised at the outcome:

That’s telling me I received 1.8 times as much traffic via IPv6 over the past month as I did over IPv4. Even discounting my backups (the 2 v6 peaks), which could account for up to half of that, that means IPv6 and IPv4 are about equal. That’s with all internal networks doing both and no attempt at traffic shaping between them - everything’s free to pick its preference.
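As a rough check of that arithmetic, here is a minimal sketch. The 1.8 ratio and the "up to half" backup share come from the paragraph above; the function name v6_v4_ratio is made up for illustration.

```shell
#!/bin/sh
# v6_v4_ratio RATIO BACKUP_SHARE
#   RATIO:        IPv6 bytes received divided by IPv4 bytes received
#   BACKUP_SHARE: fraction of the IPv6 traffic attributed to backups (0 to 1)
# Prints the IPv6:IPv4 ratio once the backup traffic is discounted.
# (Hypothetical helper, not something from the original setup.)
v6_v4_ratio() {
    awk -v r="$1" -v b="$2" 'BEGIN { printf "%.2f\n", r * (1 - b) }'
}

# Worst case from the text: backups are half of all IPv6 traffic.
v6_v4_ratio 1.8 0.5
```

With half of the IPv6 traffic discounted the ratio comes out just under 1, which is where the "about equal" estimate comes from.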

I don’t have a breakdown of what went where, but if you run a network and you’re not v6 enabled, why not? From my usage at least you’re getting towards being in the minority.

Getting native IPv6 over Fibre To The Home

Last week I changed ISP. My primary reason was to get native IPv6 at home. As a side effect I’ve lowered my monthly costs and moved from VDSL2 (Fibre To The Cabinet/FTTC) to GPON (Fibre To The Premises/FTTP). But trust me when I say the thing that prompted the move was the desire for native v6.

First, some words of thanks to my previous ISP. I was with MCL Services who have been absolutely fantastic; no issues with service, and responsive support when I had queries. The problem was that they’re a Gamma reseller, and Gamma are showing no signs of enabling v6 (I had Daniel poke them several times, because even a rough ETA would have kept me hanging around to see if they made good on it).

What caused me to even start looking elsewhere was BT mailshotting me about the fact I’m in a Fibre First area and FTTP was thus now available to me. They dangled some pretty attractive pricing in front of me (£50/month for 300M/50M). BT have enabled v6 across their consumer network (and should be applauded for that), but unfortunately don’t provide a static v6 range as part of that. One of the things I wanted was to give my internal hosts static IPs. A dynamic range doesn’t allow for that. So BT was a no.

Conveniently enough there’d been a thread on the debian-uk mailing list about server-friendly ISPs. I’m not looking to run services on the end of my broadband line - as long as I can SSH in and provide a basic HTTPS endpoint for some remote services to call in that’s perfect - but a mention of Aquiss came up as a competent option. I was already aware of them as I know several existing users, and I knew they use Entanet to provide pieces of their service. Enta are long time IPv6 supporters, so I took a look. And discovered that I could move to an equivalent service to what I was on, except over fibre and for cheaper (because there was no need to pay for phone line rental I wasn’t using). No brainer.

So last Thursday an engineer from Openreach turned up. Like last time the job was bigger than expected (I think the Openreach database has just failed to record the fact the access isn’t where they think it is). Also like last time they didn’t just go away, but instead arranged for another engineer to turn up to help with the two-man bit of the job, and got it all done that day. The only worrying bit was when my existing line went down - FTTP is a brand new install rather than a migration - but that turned out to be because they run a new hybrid cable from the pole with both fibre and copper on it. Once the new cable was spliced back in the existing connection came back fine. Total outage was just over an hour - something to be aware of if you’re trying to work from home during the install like I was. Thankfully I have enough spare data on my Three contract that I was able to keep working.

A picture of the ONT as installed is above; it’s a new style one with no battery backup and a single phone port + ethernet port. I had it placed beside my existing master socket, because that’s where everything is currently situated, but I was given the option to have it placed elsewhere. There’s a wall-wart for power, so you do need a free socket. The ethernet port provides a GigE connection (even though my line is currently only configured for 80M/20M), and it does PPPoE - no VLANs or anything required, though you do need the username/password from your ISP for CHAP authentication, which looks exactly like a normal ADSL username/password.

I rejigged my OpenWRT setup so I had a spare port on the HomeHub 5A, then configured up a “wan2” interface with the PPPoE login details and IPv6 enabled:

    config interface 'wan2'
        option ifname 'eth0.100'
        option proto 'pppoe'
        option username 'noodles@fttp'
        option password 'gimmev6fttp'
        option ipv6 '1'
        option ip6prefix '2001:xxxx:yyyy:zz00::/56'
        option defaultroute 0

(I’d put the spare port into VLAN 100, hence eth0.100)
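If you'd rather not edit /etc/config/network by hand, roughly the same interface can be created with the uci command line tool. This is only a sketch: the function name configure_wan2 is made up, and the username, password and prefix are arguments you would fill in with your own ISP's details.

```shell
#!/bin/sh
# Sketch: create the 'wan2' PPPoE interface via uci instead of editing
# /etc/config/network directly. configure_wan2 is a hypothetical helper;
# its arguments are placeholders for your own ISP details.
configure_wan2() {
    user="$1"
    pass="$2"
    prefix="$3"
    uci set network.wan2=interface
    uci set network.wan2.ifname='eth0.100'   # spare port, in VLAN 100
    uci set network.wan2.proto='pppoe'
    uci set network.wan2.username="$user"
    uci set network.wan2.password="$pass"
    uci set network.wan2.ipv6='1'
    uci set network.wan2.ip6prefix="$prefix"
    uci set network.wan2.defaultroute='0'    # keep the IPv4 default route on the old line
    uci commit network
}

# Example call on the router (not run here; 2001:db8::/32 is the documentation range):
#   configure_wan2 'user@isp' 'secret' '2001:db8:1200::/56'
#   /etc/init.d/network reload
```

The function is deliberately not called automatically, so sourcing the file on a router changes nothing until you invoke it with real values.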

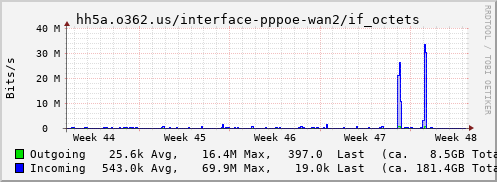

For the moment I’m using the old line for IPv4 (I have a 30 day notice on it) and the new line for just IPv6, hence setting defaultroute to 0. I actually end up with more IPv6 traffic than I’d expect (though there’d be more if my TV did v6 for Netflix):

I had to do a bunch of internal reconfiguration as well; I’d previously used a Hurricane Electric tunnel, but only enabled it for certain hosts (I couldn’t saturate my connection over the tunnel). Now I have native IPv6 I wanted everything configured up properly, with internal DNS properly sorted so internal traffic tried to use v6 where possible. That means my MQTT broker is doing v6 (though unfortunately not for my ESP8266 devices), and I’m accessing my Home Assistant instance over v6 (I needed server_host: ::0 in the http configuration section to make it listen on v6, which also stops it listening on v4. Not a problem for me as I front it with an SSL proxy that can do both). Equally SSH to all my internal hosts and containers is now over v6.

Of course, ultimately there’s no real external visible indication of the fact things are using IPv6, even for external bits. Which is exactly as it should be.

Debian Buster / OpenWRT 18.06.4 upgrade notes

Yesterday was mostly a day of doing upgrades (as well as the usual Saturday things like a parkrun). First on the list was finally upgrading my last major machine from Stretch to Buster. I’d done everything else and wasn’t expecting any major issues, but it runs more things so there’s generally more opportunity for problems. Some notes I took along the way:

- apt upgrade updated collectd but due to the loose dependencies (the collectd package has a lot of plugins and chooses not to depend on anything other than what the core needs) libsensors5 was not pulled in so the daemon restart failed. This made apt/dpkg unhappy until I manually pulled in libsensors5 (replacing libsensors4).
- My custom fail2ban rule to drop spammers trying to register for wiki accounts too frequently needed the addition of a datepattern entry to work properly.
- The new version of python-flaskext.wtf caused deprecation warnings from a custom site I run, fixed by moving from Form to FlaskForm. I still have a problem with a TypeError: __init__() takes at most 2 arguments (3 given) error to track down.
- ejabberd is still a pain. This time the change of the erlang node name caused issues with it starting up. There was a note in NEWS.Debian but the failure still wasn’t obvious at first.
- For most of the config files that didn’t update automatically I just did a manual vimdiff and pulled in the updated comments; the changes were things I wanted to keep like custom SSL certificate configuration or similar.
- PostgreSQL wasn’t as smooth as last time. A pg_upgradecluster 9.6 main mostly did the right thing (other than taking ages to migrate the SpamAssassin Bayes database), but left 9.6 still running rather than 11.
- I’m hitting #924178 in my duplicity backups - they’re still working ok, but might be time to finally try restic
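For the PostgreSQL point, one way to spot the wrong cluster being left running is to ask pg_lsclusters (from postgresql-common) which clusters are online. A minimal sketch, assuming the usual column order of its output (version, cluster name, port, status, ...); the helper name online_clusters is made up, and this is not necessarily how the problem above was diagnosed.

```shell
#!/bin/sh
# online_clusters: print "version/name" for every PostgreSQL cluster that
# pg_lsclusters reports as online. Hypothetical helper; assumes the status
# is the 4th whitespace-separated column (Ver Cluster Port Status ...).
online_clusters() {
    # The header row has "Status" in column 4, so it never matches.
    pg_lsclusters | awk '$4 ~ /^online/ { print $1 "/" $2 }'
}

# Example on the upgraded host (not run here):
#   online_clusters
```

If this still lists 9.6/main after the upgrade, the old cluster is the one answering queries and the new one needs attention before the old one is dropped.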

All in all it went smoothly enough; the combination of a lot of packages and the PostgreSQL migration caused most of the time. Perhaps it’s time to look at something with SSDs rather than spinning rust (definitely something to check out when I’m looking for alternative hosts).

The other major-ish upgrade was taking my house router (a repurposed BT HomeHub 5A) from OpenWRT 18.06.2 to 18.06.4. Not as big as the Debian upgrade, but with the potential to leave me with non-working broadband if it went wrong. The CLI sysupgrade approach worked fine (as it has in the past), helped by the fact I’ve added my MQTT and SSL configuration files to /etc/sysupgrade.conf so they get backed up and restored. OpenWRT does a full reflash for an upgrade given the tight flash constraints, so other than the config files that get backed up you need to restore anything extra. This includes non-default packages that were installed, so I end up with something like

    opkg update
    opkg install mosquitto-ssl 6in4 ip-tiny picocom kmod-usb-serial-pl2303

And then I have a custom compile of the collectd-mod-network package to enable encryption, and my mqtt-arp tool to watch for house occupants:

    opkg install collectd collectd-mod-cpu collectd-mod-interface \
        collectd-mod-load collectd-mod-memory collectd-mod-sensors \
        /tmp/collectd-mod-network_5.8.1-1_mips_24kc.ipk
    opkg install /tmp/mqtt-arp_1.0-1_mips_24kc.ipk
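One way to avoid retyping those package lists after every reflash is to keep them in a text file that is itself listed in /etc/sysupgrade.conf, and reinstall from it. This is a sketch of that idea rather than what was actually done here; the function name reinstall_packages and the file path in the usage comment are assumptions.

```shell
#!/bin/sh
# reinstall_packages LISTFILE
# Reads one package name per line from LISTFILE ('#' comments and blank
# lines are ignored) and installs them all with a single opkg call.
# Hypothetical helper, not part of OpenWRT.
reinstall_packages() {
    pkgs=$(sed -e 's/#.*//' -e '/^[[:space:]]*$/d' "$1" | tr '\n' ' ')
    [ -n "$pkgs" ] || return 0      # nothing listed, nothing to do
    opkg update || return 1
    # Word splitting of $pkgs is intentional: one argument per package.
    opkg install $pkgs
}

# Example on the router (not run here; the path is an assumption):
#   reinstall_packages /etc/extra-packages.txt
```

Locally built .ipk files such as the custom collectd-mod-network would still need copying back to the router first, since only the files named in /etc/sysupgrade.conf survive the reflash.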

One thing that got me was the fact that installing the 6in4 package didn’t give me working v6; I had to restart the router for it to even configure up its v6 interfaces. Not a problem, I just didn’t notice for a few hours.

While I was on a roll I upgraded the kernel on my house server to the latest stable release, and Home Assistant to 0.100.2. As expected neither had any hiccups.

Ada Lovelace Day: 5 Amazing Women in Tech

It’s Ada Lovelace day and I’ve been lax in previous years about celebrating some of the talented women in technology I know or follow on the interwebs. So, to make up for it, here are 5 amazing technologists.

I was initially aware of Allison through her work on Perl, was vaguely aware of the fact she was working on Ubuntu, briefly overlapped with her at HPE (and thought it was impressive HP were hiring such a high calibre of Free Software folk) when she was working on OpenStack, and have had the pleasure of meeting her in person due to the fact we both work on Debian. In the continuing theme of being able to do all things tech she’s currently studying for a PhD at Cambridge (the real one), and has already written a fascinating paper about the security misconceptions around virtual machines and containers. She’s also been doing things with home automation, properly, with local speech recognition rather than relying on any external assistant service (I will, eventually, find the time to follow her advice and try this out for myself).

Graphics are one of the many things I just can’t do. I’m not artistic and I’m in awe of anyone who is capable of wrangling bits to make computers do graphical magic. People who can reverse engineer graphics hardware that would otherwise only be supported by icky binary blobs impress me even more. Alyssa is such a person, working on the Panfrost driver for ARM’s Mali Midgard + Bifrost GPUs. The lack of a Free driver stack for this hardware is a real problem for the ARM ecosystem and she has been tirelessly working to bring this to many ARM based platforms. I was delighted when I saw one of my favourite Free Software consultancies, Collabora, had given her an internship over the summer. (Selfishly I’m hoping it means the Gemini PDA will eventually be able to run an upstream kernel with accelerated graphics.)

The first time I saw Angie talk it was about the user experience of Virtual Reality, and how it had an entirely different set of guidelines to conventional user interfaces. In particular the premise of not trying to shock or surprise the user while they’re in what can be a very immersive environment. Obvious once someone explains it to you! Turns out she was also involved in the early days of custom PC builds and internet cafes in Northern Ireland, and has interesting stories to tell. These days she’s concentrating on cyber security - I’ve her to thank for convincing me to persevere with Ghidra - having looked at Bluetooth security as part of her Masters. She’s also deeply aware of the implications of the GDPR and has done some interesting work on thinking about how it affects the computer gaming industry - both from the perspective of the author, and the player.

I’m not particularly fond of modern web design. That’s unfair of me, but web designers seem happy to load megabytes of Javascript from all over the internet just to display the most basic of holding pages. Indeed it seems that such things now require all the includes rather than being simply a matter of HTML, CSS and some graphics, all from the same server. Claire talked at Women Techmakers Belfast about moving away from all of this bloat and back to a minimalistic approach with improved performance, responsiveness and usability, without sacrificing functionality or presentation. She said all the things I want to say to web designers, but from a position of authority, being a front end developer as her day job. It’s great to see someone passionate about front-end development who wants to do things the right way, and talks about it in a way that even people without direct experience of the technologies involved (like me) can understand and appreciate.

There aren’t enough people out there who understand law and technology well. Karen is one of the few I’ve encountered who do, and not only that, but really, really gets Free software and the impact of the four freedoms on users in a way many pure technologists do not. She’s had a successful legal career that’s transitioned into being the general counsel for the Software Freedom Law Center, been the executive director of GNOME and is now the executive director of the Software Freedom Conservancy. As someone who likes to think he knows a little bit about law and technology I found Karen’s wealth of knowledge and eloquence slightly intimidating the first time I saw her speak (I think at some event in San Francisco), but I’ve subsequently (gratefully) discovered she has an incredible amount of patience (and ability) when trying to explain the nuances of free software legal issues.
