Upgrading from a CC2531 to a CC2538 Zigbee coordinator

Previously I set up a CC2531 as a Zigbee coordinator for my home automation. This has turned out to be a good move, with the 4 gang wireless switch being particularly useful. However the range of the CC2531 is fairly poor; it has a simple PCB antenna. It’s also a very basic device. I set about trying to improve the range and scalability and settled upon a CC2538 + CC2592 device, which features an MMCX antenna connector. This device also has the advantage that it’s ARM based, which I’m hopeful means I might be able to build some firmware myself using a standard GCC toolchain.

For now I fetched the JetHome firmware from https://github.com/jethome-ru/zigbee-firmware/tree/master/ti/coordinator/cc2538_cc2592 (JH_2538_2592_ZNP_UART_20211222.hex) - while it’s possible to do USB directly with the CC2538 my board doesn’t have those bits so going the external USB UART route is easier.

The device had some existing firmware on it, so I needed to erase this to force a drop into the boot loader. That means soldering up the JTAG pins and hooking it up to my Bus Pirate for OpenOCD goodness.

OpenOCD config

source [find interface/buspirate.cfg]

buspirate_port /dev/ttyUSB1

buspirate_mode normal

buspirate_vreg 1

buspirate_pullup 0

transport select jtag

source [find target/cc2538.cfg]

Steps to erase

$ telnet localhost 4444

Trying ::1...

Trying 127.0.0.1...

Connected to localhost.

Escape character is '^]'.

Open On-Chip Debugger

> mww 0x400D300C 0x7F800

> mww 0x400D3008 0x0205

> shutdown

shutdown command invoked

Connection closed by foreign host.
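As a sanity check on the 0x7F800 value written above: treated as a byte offset from the start of flash, it lines up with the start of the last flash page, which is also where the CCFG that cc2538-bsl reports (further down) lives. The sketch below only does that arithmetic. The 2 KB page size and the 0x00200000 flash base are assumptions, and reading the two writes as "set flash address, then trigger erase" is an interpretation rather than something checked against the TI user guide.

```python
# Sanity-check the address used in the OpenOCD erase step.
# Assumptions (not taken from the OpenOCD session itself): flash is mapped
# at 0x00200000 and is erased in 2 KB pages. The 512 KB size and the CCFG
# address are the values cc2538-bsl prints for this device.
FLASH_BASE = 0x00200000
FLASH_SIZE = 512 * 1024
PAGE_SIZE = 2048          # assumed CC2538 flash page size
CCFG_ADDR = 0x0027FFD4    # as reported by cc2538-bsl


def last_page_offset(flash_size: int = FLASH_SIZE, page_size: int = PAGE_SIZE) -> int:
    """Byte offset of the final flash page from the start of flash."""
    return flash_size - page_size


offset = last_page_offset()
page_start = FLASH_BASE + offset
page_end = page_start + PAGE_SIZE   # first address past the page

print(f"last page offset:  {offset:#x}")
print(f"last page range:   {page_start:#010x}-{page_end - 1:#010x}")
print(f"CCFG in last page: {page_start <= CCFG_ADDR < page_end}")
```

If those assumptions hold, erasing that one page is enough to wipe the configuration the boot ROM consults, which would explain why the device then drops into the boot loader.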

At that point I can switch to the UART connection (on PA0 + PA1) and flash using cc2538-bsl:

$ git clone https://github.com/JelmerT/cc2538-bsl.git

$ cc2538-bsl/cc2538-bsl.py -p /dev/ttyUSB1 -e -w -v ~/JH_2538_2592_ZNP_UART_20211222.hex

Opening port /dev/ttyUSB1, baud 500000

Reading data from /home/noodles/JH_2538_2592_ZNP_UART_20211222.hex

Firmware file: Intel Hex

Connecting to target...

CC2538 PG2.0: 512KB Flash, 32KB SRAM, CCFG at 0x0027FFD4

Primary IEEE Address: 00:12:4B:00:22:22:22:22

Performing mass erase

Erasing 524288 bytes starting at address 0x00200000

Erase done

Writing 524256 bytes starting at address 0x00200000

Write 232 bytes at 0x0027FEF8

Write done

Verifying by comparing CRC32 calculations.

Verified (match: 0x74f2b0a1)
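The figures in that output hang together, which is worth a quick check before trusting the flash. A small sketch using only the numbers printed above (nothing here talks to the device):

```python
# Cross-check the figures printed by cc2538-bsl.
FLASH_BASE = 0x00200000
ERASED_BYTES = 524288      # "Erasing 524288 bytes"
WRITTEN_BYTES = 524256     # "Writing 524256 bytes"
CCFG_ADDR = 0x0027FFD4     # "CCFG at 0x0027FFD4"

flash_end = FLASH_BASE + ERASED_BYTES    # first address past the flash
write_end = FLASH_BASE + WRITTEN_BYTES   # first address past the image

print(f"flash is {ERASED_BYTES // 1024} KB, ending at {flash_end:#010x}")
print(f"image ends at {write_end:#010x}, {flash_end - write_end} bytes short of the end")
print(f"image covers CCFG: {CCFG_ADDR < write_end}")
```

The image stops 32 bytes short of the end of flash but does extend past the CCFG address, which suggests (without proving) that the hex file carries its own CCFG settings.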

I then wanted to migrate from the old device to the new without having to re-pair everything. So I shut down Home Assistant and backed up the CC2531 network information using zigpy-znp (which is already installed for Home Assistant):

python3 -m zigpy_znp.tools.network_backup /dev/zigbee > cc2531-network.json

I copied the backup to cc2538-network.json and modified the coordinator_ieee to be the new device’s MAC address (rather than end up with 2 devices claiming the same MAC if/when I reuse the CC2531) and did:

python3 -m zigpy_znp.tools.network_restore --input cc2538-network.json /dev/ttyUSB1

The old CC2531 needed unplugged first, otherwise I got a RuntimeError: Network formation refused, RF environment is likely too noisy. Temporarily unscrew the antenna or shield the coordinator with metal until a network is formed. error.
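The coordinator_ieee edit is easy to get wrong by hand, so here is a minimal sketch of doing it with a script instead. It assumes coordinator_ieee is a top-level key in the backup JSON and that the new address should be written in the same textual form as the existing one; set_coordinator_ieee is a made-up helper name, not part of zigpy-znp.

```python
import json
from pathlib import Path


def set_coordinator_ieee(src: Path, dst: Path, new_ieee: str) -> str:
    """Copy a network backup, replacing coordinator_ieee; returns the old value."""
    backup = json.loads(src.read_text())
    old_ieee = backup["coordinator_ieee"]
    # Assumption: the new address uses the same textual form as the one
    # already in the file, so refuse an obviously different shape.
    if len(new_ieee) != len(old_ieee):
        raise ValueError(f"expected an address shaped like {old_ieee!r}")
    backup["coordinator_ieee"] = new_ieee
    # Everything else in the backup is written back unchanged.
    dst.write_text(json.dumps(backup, indent=2) + "\n")
    return old_ieee
```

Calling set_coordinator_ieee(Path("cc2531-network.json"), Path("cc2538-network.json"), new_address) leaves the original backup untouched, so there is still something to fall back on if the restore goes wrong.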

After that I updated my udev rules to map the CC2538 to /dev/zigbee and restarted Home Assistant. To my surprise it came up and detected the existing devices without any extra effort on my part. However that resulted in 2 coordinators being shown in the visualisation, with the old one turning up as unk_manufacturer. Fixing that involved editing /etc/homeassistant/.storage/core.device_registry and removing the entry which had the old MAC address, removing the device entry in /etc/homeassistant/.storage/zha.storage for the old MAC and then finally firing up sqlite to modify the Zigbee database:

$ sqlite3 /etc/homeassistant/zigbee.db

SQLite version 3.34.1 2021-01-20 14:10:07

Enter ".help" for usage hints.

sqlite> DELETE FROM devices_v6 WHERE ieee = '00:12:4b:00:11:11:11:11';

sqlite> DELETE FROM endpoints_v6 WHERE ieee = '00:12:4b:00:11:11:11:11';

sqlite> DELETE FROM in_clusters_v6 WHERE ieee = '00:12:4b:00:11:11:11:11';

sqlite> DELETE FROM neighbors_v6 WHERE ieee = '00:12:4b:00:11:11:11:11' OR device_ieee = '00:12:4b:00:11:11:11:11';

sqlite> DELETE FROM node_descriptors_v6 WHERE ieee = '00:12:4b:00:11:11:11:11';

sqlite> DELETE FROM out_clusters_v6 WHERE ieee = '00:12:4b:00:11:11:11:11';

sqlite> .quit
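The same clean-up can be scripted, which makes it harder to miss a table. A minimal sketch using Python's sqlite3 module: the table names are the ones from the session above (the _v6 suffix is tied to the schema version of that install and may well differ elsewhere), and purge_device is just an illustrative name.

```python
import sqlite3

# Table names as used in the sqlite session above; adjust the suffix to
# match whatever schema version your zigbee.db actually has.
TABLES = [
    "devices_v6", "endpoints_v6", "in_clusters_v6",
    "neighbors_v6", "node_descriptors_v6", "out_clusters_v6",
]


def purge_device(conn: sqlite3.Connection, ieee: str) -> dict[str, int]:
    """Delete every row for one IEEE address; returns rows removed per table."""
    removed = {}
    with conn:  # one transaction: commit on success, roll back on error
        for table in TABLES:
            where, params = "ieee = ?", [ieee]
            if table == "neighbors_v6":
                # The neighbour table references the device in two columns.
                where += " OR device_ieee = ?"
                params.append(ieee)
            cur = conn.execute(f"DELETE FROM {table} WHERE {where}", params)
            removed[table] = cur.rowcount
    return removed
```

As with the manual version, Home Assistant should be stopped and zigbee.db copied somewhere safe before running something like purge_device(sqlite3.connect("/etc/homeassistant/zigbee.db"), "00:12:4b:00:11:11:11:11").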

So far it all seems a bit happier than with the CC2531; I’ve been able to pair a light bulb that was previously detected but would not integrate, which suggests the range is improved.

(This post is another in the set of “things I should write down so I can just grep my own website when I forget what I did to do foo”.)

Building a desktop to improve my work/life balance

It’s been over 20 months since the first COVID lockdown kicked in here in Northern Ireland and I started working from home. Even when the strict lockdown was lifted the advice here has continued to be “If you can work from home you should work from home”. I’ve been into the office here and there (for new starts given you need to hand over a laptop and sort out some login details it’s generally easier to do so in person, and I’ve had a couple of whiteboard sessions that needed the high bandwidth face to face communication), but day to day is all from home.

Early on I commented that work had taken over my study. This has largely continued to be true. I set my work laptop on the stand on a Monday morning and it sits there until Friday evening, when it gets switched for the personal laptop. I have a lovely LG 34UM88 21:9 Ultrawide monitor, and my laptops are small and light so I much prefer to use them docked. Also my general working pattern is to have a lot of external connections up and running (build machine, test devices, log host) which means a suspend/resume cycle disrupts things. So I like to minimise moving things about.

I spent a little bit of time trying to find a dual laptop stand so I could have both machines set up and switch between them easily, but I didn’t find anything that didn’t seem to be geared up for DJs with a mixer + laptop combo taking up quite a bit of desk space rather than stacking laptops vertically. Eventually I realised that the right move was probably a desktop machine.

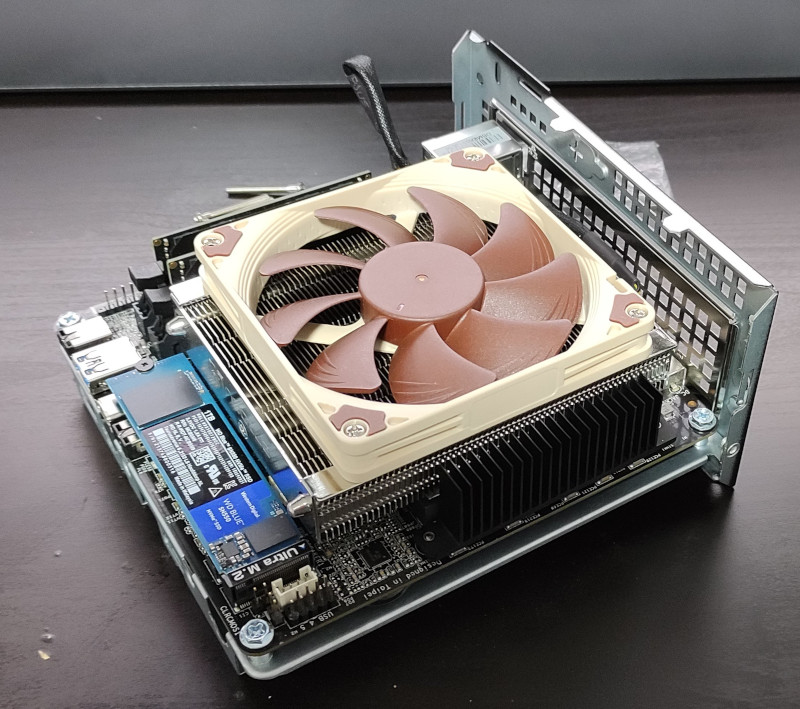

Now, I haven’t had a desktop machine since before I moved to the US, realising at the time that having everything on my laptop was much more convenient. I decided I didn’t want something too big and noisy. Cheap GPUs seem hard to get hold of these days - I’m not a gamer so all I need is something that can drive a ~4K monitor reliably enough. Looking around, the AMD Ryzen 7 5700G seemed to be a decent CPU with one of the better integrated GPUs. I spent some time looking for a reasonable Mini-ITX case + motherboard and then I happened upon the ASRock DeskMini X300. This turns out to be perfect; I’ve no need for a PCIe slot or anything more than an M.2 SSD. I paired it with a Noctua NH-L9a-AM4 heatsink + fan (same as I use in the house server), 32GB DDR4 and a 1TB WD SN550 NVMe SSD. Total cost just under £650 inc VAT + delivery (and that’s a story for another post).

A desktop solves the problem of fitting both machines on the desk at once, but there’s still the question of smoothly switching between them. I read Evgeni Golov’s article on a simple KVM switch for €30. My monitor has multiple inputs, so that’s sorted. I did have a cheap USB2 switch (all I need for the keyboard/trackball) but it turned out to be pretty unreliable at the host detecting the USB change. I bought a UGREEN USB 3.0 Sharing Switch Box instead and it’s turned out to be pretty reliable. The problem is that the LG 34UM88 turns out to have a poor DDC implementation, so while I can flip the keyboard easily with the UGREEN box I also have to manually select the monitor input. Which is a bit annoying, but not terrible.

The important question is whether this has helped. I built all this at the end of October, so I’ve had a month to play with it. Turns out I should have done it at some point last year. At the end of the day instead of either sitting “at work” for a bit longer, or completely avoiding the study, I’m able to lock the work machine and flick to my personal setup. Even sitting in the same seat that “disconnect”, and the knowledge I won’t see work Slack messages or emails come in and feel I should respond, really helps. It also means I have access to my personal setup during the week without incurring a hit at the start of the working day when I have to set things up again. So it’s much easier to just dip in to some personal tech stuff in the evening than it was previously. Also, as I don’t need to set up the personal config each time, I can pick up where I left off. All of which is really nice.

It’s also got me thinking about other minor improvements I should make to my home working environment to try and improve things. One obvious thing now the winter is here again is to improve my lighting; I have a good overhead LED panel but it’s terribly positioned for video calls, being just behind me. So I think I’m looking for some sort of strip light I can have behind the large monitor to give a decent degree of backlight (possibly bouncing off the white wall). Lots of cheap options I’m not convinced about, and I’ve had a few ridiculously priced options from photographer friends; suggestions welcome.

Adding Zigbee to my home automation

My home automation setup has been fairly static recently; it does what we need and generally works fine. One area I think could be better is controlling it; we have access to Home Assistant on our phones, and the Alexa downstairs can control things, but there are no smart assistants upstairs and sometimes it would be nice to just push a button to turn on the light rather than having to get my phone out. Thanks to the fact the UK generally doesn’t have a neutral wire in wall switches that means looking at something battery powered. Which means wifi based devices are a poor choice, and it’s necessary to look at something lower power like Zigbee or Z-Wave.

Zigbee seems like the better choice; it’s a more open standard and there are generally more devices easily available from what I’ve seen (e.g. Philips Hue and IKEA TRÅDFRI). So I bought a couple of Xiaomi Mi Smart Home Wireless Switches, and a CC2530 module and then ignored it for the best part of a year. Finally I got around to flashing the Z-Stack firmware that Koen Kanters kindly provides. (Insert rant about hardware manufacturers that require pay-for tool chains. The CC2530 is even worse because it’s 8051 based, so SDCC should be able to compile for it, but the TI Zigbee libraries are only available in a format suitable for IAR’s embedded workbench.)

Flashing the CC2530 is a bit of faff. I ended up using the CCLib fork by Stephan Hadinger which supports the ESP8266. The nice thing about the CC2530 module is it has 2.54mm pitch pins so nice and easy to jumper up. It then needs a USB/serial dongle to connect it up to a suitable machine, where I ran Zigbee2MQTT. This scares me a bit, because it’s a bunch of node.js pulling in a chunk of stuff off npm. On the flip side, it Just Works and I was able to pair the Xiaomi button with the device and see MQTT messages that I could then use with Home Assistant. So of course I tore down that setup and went and ordered a CC2531 (the variant with USB as part of the chip). The idea here was my test setup was upstairs with my laptop, and I wanted something hooked up in a more permanent fashion.

Once the CC2531 arrived I got distracted writing support for the Desk Viking to work with CCLib (and modified it a bit for Python3 and some speed ups). I flashed the dongle up with the Z-Stack Home 1.2 (default) firmware, and plugged it into the house server. At this point I more closely investigated what Home Assistant had to offer in terms of Zigbee integration. It turns out the ZHA integration has support for the ZNP protocol that the TI devices speak (I’m reasonably sure it didn’t when I first looked some time ago), so that seemed like a better option than adding the MQTT layer in the middle.

I hit some complexity passing the dongle (which turns up as /dev/ttyACM0) through to the Home Assistant container. First I needed an override file in /etc/systemd/nspawn/hass.nspawn:

[Files]

Bind=/dev/ttyACM0:/dev/zigbee

[Network]

VirtualEthernet=true

(I’m not clear why the VirtualEthernet needed to exist; without it networking broke entirely but I couldn’t see why it worked with no override file.)

A udev rule on the host to change the ownership of the device file so the root user and dialout group in the container could see it was also necessary, so into /etc/udev/rules.d/70-persistent-serial.rules went:

# Zigbee for HASS

SUBSYSTEM=="tty", ATTRS{idVendor}=="0451", ATTRS{idProduct}=="16a8", SYMLINK+="zigbee", \

MODE="660", OWNER="1321926676", GROUP="1321926676"
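To confirm the rule has actually taken effect, it helps to look at what the symlink resolves to and who owns the underlying node. A minimal sketch (describe_node is a hypothetical helper name; the expected values in the note below are simply read off the rule above):

```python
import os
import stat


def describe_node(path: str) -> dict:
    """Report where a device path points and who may open it."""
    st = os.stat(path)  # follows symlinks, so this describes the real node
    return {
        "target": os.path.realpath(path),
        "mode": oct(stat.S_IMODE(st.st_mode)),
        "uid": st.st_uid,
        "gid": st.st_gid,
        "is_char_device": stat.S_ISCHR(st.st_mode),
    }
```

On the host, describe_node("/dev/zigbee") should then report a character device with mode 0o660 and the uid/gid from the rule, with the target being whichever ttyACM device the dongle was given.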

In the container itself I had to switch PrivateDevices=true to PrivateDevices=false in the home-assistant.service file (which took me a while to figure out; yay for locking things down and then needing to use those locked down things).

Finally I added the hass user to the dialout group. At that point I was able to go and add the integration with Home Assistant, and add the button as a new device. Excellent. I did find I needed a newer version of Home Assistant to get support for the button, however. I was still on 2021.1.5 due to upstream dropping support for Python 3.7 and my not being prepared to upgrade to Debian 11 until it was actually released, so the version of zha-quirks didn’t have the correct info. Upgrading to Home Assistant 2021.8.7 sorted that out.

There was another slight problem. Range. Really I want to use the button upstairs. The server is downstairs, and most of my internal walls are brick. The solution turned out to be a TRÅDFRI socket, which replaced the existing ESP8266 wifi socket controlling the stair lights. That was close enough to the server to have a decent signal, and it acts as a Zigbee router so provides a strong enough signal for devices upstairs. The normal approach seems to be to have a lot of Zigbee light bulbs, but I have mostly kept overhead lights as uncontrolled - we don’t use them day to day and it provides a nice fallback if the home automation has issues.

Of course installing Zigbee for a single button would seem to be a bit pointless. So I ordered up a Sonoff door sensor to put on the front door (much smaller than expected - those white boxes on the door are it in the picture above). And I have a 4 gang wireless switch ordered to go on the landing wall upstairs.

Now I’ve got a Zigbee setup there are a few more things I’m thinking of adding, where wifi isn’t an option due to the need for battery operation (monitoring the external gas meter springs to mind). The CC2530 probably isn’t suitable for my needs, as I’ll need to write some custom code to handle the bits I want, but there do seem to be some ARM based devices which might well prove suitable…

Digging into Kubernetes containers

Having built a single node Kubernetes cluster and had a poke at what it’s doing in terms of networking, the next thing I want to do is figure out what it’s doing in terms of containers. You might argue this should have come before networking, but to me the networking piece is more non-standard than the container piece, so I wanted to understand that first.

Let’s start with a process listing on the host.

ps faxno user,stat,cmd

There are a number of processes from the host kernel we don’t care about:

kernel processes

USER STAT CMD

0 S [kthreadd]

0 I< \_ [rcu_gp]

0 I< \_ [rcu_par_gp]

0 I< \_ [kworker/0:0H-events_highpri]

0 I< \_ [mm_percpu_wq]

0 S \_ [rcu_tasks_rude_]

0 S \_ [rcu_tasks_trace]

0 S \_ [ksoftirqd/0]

0 I \_ [rcu_sched]

0 S \_ [migration/0]

0 S \_ [cpuhp/0]

0 S \_ [cpuhp/1]

0 S \_ [migration/1]

0 S \_ [ksoftirqd/1]

0 I< \_ [kworker/1:0H-kblockd]

0 S \_ [cpuhp/2]

0 S \_ [migration/2]

0 S \_ [ksoftirqd/2]

0 I< \_ [kworker/2:0H-events_highpri]

0 S \_ [cpuhp/3]

0 S \_ [migration/3]

0 S \_ [ksoftirqd/3]

0 I< \_ [kworker/3:0H-kblockd]

0 S \_ [kdevtmpfs]

0 I< \_ [netns]

0 S \_ [kauditd]

0 S \_ [khungtaskd]

0 S \_ [oom_reaper]

0 I< \_ [writeback]

0 S \_ [kcompactd0]

0 SN \_ [ksmd]

0 SN \_ [khugepaged]

0 I< \_ [kintegrityd]

0 I< \_ [kblockd]

0 I< \_ [blkcg_punt_bio]

0 I< \_ [edac-poller]

0 I< \_ [devfreq_wq]

0 I< \_ [kworker/0:1H-kblockd]

0 S \_ [kswapd0]

0 I< \_ [kthrotld]

0 I< \_ [acpi_thermal_pm]

0 I< \_ [ipv6_addrconf]

0 I< \_ [kstrp]

0 I< \_ [zswap-shrink]

0 I< \_ [kworker/u9:0-hci0]

0 I< \_ [kworker/2:1H-kblockd]

0 I< \_ [ata_sff]

0 I< \_ [sdhci]

0 S \_ [irq/39-mmc0]

0 I< \_ [sdhci]

0 S \_ [irq/42-mmc1]

0 S \_ [scsi_eh_0]

0 I< \_ [scsi_tmf_0]

0 S \_ [scsi_eh_1]

0 I< \_ [scsi_tmf_1]

0 I< \_ [kworker/1:1H-kblockd]

0 I< \_ [kworker/3:1H-kblockd]

0 S \_ [jbd2/sda5-8]

0 I< \_ [ext4-rsv-conver]

0 S \_ [watchdogd]

0 S \_ [scsi_eh_2]

0 I< \_ [scsi_tmf_2]

0 S \_ [usb-storage]

0 I< \_ [cfg80211]

0 S \_ [irq/130-mei_me]

0 I< \_ [cryptd]

0 I< \_ [uas]

0 S \_ [irq/131-iwlwifi]

0 S \_ [card0-crtc0]

0 S \_ [card0-crtc1]

0 S \_ [card0-crtc2]

0 I< \_ [kworker/u9:2-hci0]

0 I \_ [kworker/3:0-events]

0 I \_ [kworker/2:0-events]

0 I \_ [kworker/1:0-events_power_efficient]

0 I \_ [kworker/3:2-events]

0 I \_ [kworker/1:1]

0 I \_ [kworker/u8:1-events_unbound]

0 I \_ [kworker/0:2-events]

0 I \_ [kworker/2:2]

0 I \_ [kworker/u8:0-events_unbound]

0 I \_ [kworker/0:1-events]

0 I \_ [kworker/0:0-events]
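Kernel threads are easy to pick out mechanically: they have no command line, so ps prints the thread name in square brackets. A rough sketch that splits ps output on that basis, assuming the user,stat,cmd column layout used above (a user process that rewrites its title to start with a bracket would be misclassified):

```python
def split_kernel_threads(ps_lines):
    """Partition `ps faxno user,stat,cmd` rows into (kernel, userspace)."""
    kernel, userspace = [], []
    for line in ps_lines:
        fields = line.split(None, 2)      # user, stat, rest of line
        if len(fields) < 3 or fields[0] == "USER":
            continue                      # skip blanks and the header row
        # Drop the forest-drawing characters ps puts before child commands.
        cmd = fields[2].lstrip("|\\_ ")
        (kernel if cmd.startswith("[") else userspace).append(cmd)
    return kernel, userspace


sample = [
    "USER STAT CMD",
    "0 S [kthreadd]",
    "0 I< \\_ [rcu_gp]",
    "0 Ss /sbin/init",
    "0 Ss | \\_ -bash",
]
kernel, userspace = split_kernel_threads(sample)
print(kernel)
print(userspace)
```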

There are various basic host processes, including my SSH connections, and Docker. I note it’s using containerd. We also see kubelet, the Kubernetes node agent.

host processes

USER STAT CMD

0 Ss /sbin/init

0 Ss /lib/systemd/systemd-journald

0 Ss /lib/systemd/systemd-udevd

101 Ssl /lib/systemd/systemd-timesyncd

0 Ssl /sbin/dhclient -4 -v -i -pf /run/dhclient.enx00e04c6851de.pid -lf /var/lib/dhcp/dhclient.enx00e04c6851de.leases -I -df /var/lib/dhcp/dhclient6.enx00e04c6851de.leases enx00e04c6851de

0 Ss /usr/sbin/cron -f

104 Ss /usr/bin/dbus-daemon --system --address=systemd: --nofork --nopidfile --systemd-activation --syslog-only

0 Ssl /usr/sbin/dockerd -H fd://

0 Ssl /usr/sbin/rsyslogd -n -iNONE

0 Ss /usr/sbin/smartd -n

0 Ss /lib/systemd/systemd-logind

0 Ssl /usr/bin/containerd

0 Ss+ /sbin/agetty -o -p -- \u --noclear tty1 linux

0 Ss sshd: /usr/sbin/sshd -D [listener] 0 of 10-100 startups

0 Ss \_ sshd: root@pts/1

0 Ss | \_ -bash

0 R+ | \_ ps faxno user,stat,cmd

0 Ss \_ sshd: noodles [priv]

1000 S \_ sshd: noodles@pts/0

1000 Ss+ \_ -bash

0 Ss /lib/systemd/systemd --user

0 S \_ (sd-pam)

1000 Ss /lib/systemd/systemd --user

1000 S \_ (sd-pam)

0 Ssl /usr/bin/kubelet --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf --config=/var/lib/kubelet/config.yaml --network-plugin=cni --pod-infra-container-image=k8s.gcr.io/pause:3.4.1

And that just leaves a bunch of container related processes:

container processes

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id fd95c597ff3171ff110b7bf440229e76c5108d5d93be75ffeab54869df734413 -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id c2ff2c50f0bc052feda2281741c4f37df7905e3b819294ec645148ae13c3fe1b -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 589c1545d9e0cdf8ea391745c54c8f4db49f5f437b1a2e448e7744b2c12f8856 -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 6f417fd8a8c573a2b8f792af08cdcd7ce663457f0f7218c8d55afa3732e6ee94 -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id afa9798c9f663b21df8f38d9634469e6b4db0984124547cd472a7789c61ef752 -address /run/containerd/containerd.sock

0 Ssl \_ kube-scheduler --authentication-kubeconfig=/etc/kubernetes/scheduler.conf --authorization-kubeconfig=/etc/kubernetes/scheduler.conf --bind-address=127.0.0.1 --kubeconfig=/etc/kubernetes/scheduler.conf --leader-elect=true --port=0

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 4b3708b62f4d427690f5979848c59fce522dab6c62a9c53b806ffbaef3f88e62 -address /run/containerd/containerd.sock

0 Ssl \_ kube-controller-manager --authentication-kubeconfig=/etc/kubernetes/controller-manager.conf --authorization-kubeconfig=/etc/kubernetes/controller-manager.conf --bind-address=127.0.0.1 --client-ca-file=/etc/kubernetes/pki/ca.crt --cluster-name=kubernetes --cluster-signing-cert-file=/etc/kubernetes/pki/ca.crt --cluster-signing-key-file=/etc/kubernetes/pki/ca.key --controllers=*,bootstrapsigner,tokencleaner --kubeconfig=/etc/kubernetes/controller-manager.conf --leader-elect=true --port=0 --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt --root-ca-file=/etc/kubernetes/pki/ca.crt --service-account-private-key-file=/etc/kubernetes/pki/sa.key --use-service-account-credentials=true

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 89f35bf7a825eb97db7035d29aa475a3a1c8aaccda0860a46388a3a923cd10bc -address /run/containerd/containerd.sock

0 Ssl \_ kube-apiserver --advertise-address=192.168.53.147 --allow-privileged=true --authorization-mode=Node,RBAC --client-ca-file=/etc/kubernetes/pki/ca.crt --enable-admission-plugins=NodeRestriction --enable-bootstrap-token-auth=true --etcd-cafile=/etc/kubernetes/pki/etcd/ca.crt --etcd-certfile=/etc/kubernetes/pki/apiserver-etcd-client.crt --etcd-keyfile=/etc/kubernetes/pki/apiserver-etcd-client.key --etcd-servers=https://127.0.0.1:2379 --insecure-port=0 --kubelet-client-certificate=/etc/kubernetes/pki/apiserver-kubelet-client.crt --kubelet-client-key=/etc/kubernetes/pki/apiserver-kubelet-client.key --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.crt --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client.key --requestheader-allowed-names=front-proxy-client --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt --requestheader-extra-headers-prefix=X-Remote-Extra- --requestheader-group-headers=X-Remote-Group --requestheader-username-headers=X-Remote-User --secure-port=6443 --service-account-issuer=https://kubernetes.default.svc.cluster.local --service-account-key-file=/etc/kubernetes/pki/sa.pub --service-account-signing-key-file=/etc/kubernetes/pki/sa.key --service-cluster-ip-range=10.96.0.0/12 --tls-cert-file=/etc/kubernetes/pki/apiserver.crt --tls-private-key-file=/etc/kubernetes/pki/apiserver.key

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 2dabff6e4f59c96d931d95781d28314065b46d0e6f07f8c65dc52aa465f69456 -address /run/containerd/containerd.sock

0 Ssl \_ etcd --advertise-client-urls=https://192.168.53.147:2379 --cert-file=/etc/kubernetes/pki/etcd/server.crt --client-cert-auth=true --data-dir=/var/lib/etcd --initial-advertise-peer-urls=https://192.168.53.147:2380 --initial-cluster=udon=https://192.168.53.147:2380 --key-file=/etc/kubernetes/pki/etcd/server.key --listen-client-urls=https://127.0.0.1:2379,https://192.168.53.147:2379 --listen-metrics-urls=http://127.0.0.1:2381 --listen-peer-urls=https://192.168.53.147:2380 --name=udon --peer-cert-file=/etc/kubernetes/pki/etcd/peer.crt --peer-client-cert-auth=true --peer-key-file=/etc/kubernetes/pki/etcd/peer.key --peer-trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt --snapshot-count=10000 --trusted-ca-file=/etc/kubernetes/pki/etcd/ca.crt

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 73fae81715b670255b66419a7959798b287be7bbb41e96f8b711fa529aa02f0d -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 26d92a720c560caaa5f8a0217bc98e486b1c032af6c7c5d75df508021d462878 -address /run/containerd/containerd.sock

0 Ssl \_ /usr/local/bin/kube-proxy --config=/var/lib/kube-proxy/config.conf --hostname-override=udon

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 7104f65b5d92a56a2df93514ed0a78cfd1090ca47b6ce4e0badc43be6c6c538e -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 48d735f7f44e3944851563f03f32c60811f81409e7378641404035dffd8c1eb4 -address /run/containerd/containerd.sock

0 Ssl \_ /usr/bin/weave-npc

0 S< \_ /usr/sbin/ulogd -v

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 36b418e69ae7076fe5a44d16cef223d8908016474cb65910f2fd54cca470566b -address /run/containerd/containerd.sock

0 Ss \_ /bin/sh /home/weave/launch.sh

0 Sl \_ /home/weave/weaver --port=6783 --datapath=datapath --name=12:82:8f:ed:c7:bf --http-addr=127.0.0.1:6784 --metrics-addr=0.0.0.0:6782 --docker-api= --no-dns --db-prefix=/weavedb/weave-net --ipalloc-range=192.168.0.0/24 --nickname=udon --ipalloc-init consensus=0 --conn-limit=200 --expect-npc --no-masq-local

0 Sl \_ /home/weave/kube-utils -run-reclaim-daemon -node-name=udon -peer-name=12:82:8f:ed:c7:bf -log-level=debug

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 534c0a698478599277482d97a137fab8ef4d62db8a8a5cf011b4bead28246f70 -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 9ffd6b668ddfbf3c64c6783bc6f4f6cc9e92bfb16c83fb214c2cbb4044993bf0 -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 4a30785f91873a7e6a191e86928a789760a054e4fa6dcd7048a059b42cf19edf -address /run/containerd/containerd.sock

0 Ssl \_ /coredns -conf /etc/coredns/Corefile

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 649a507d45831aca1de5231b49afc8ff37d90add813e7ecd451d12eedd785b0c -address /run/containerd/containerd.sock

0 Ssl \_ /coredns -conf /etc/coredns/Corefile

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 62b369de8d8cece4d33ec9fda4d23a9718379a8df8b30173d68f20bff830fed2 -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 7cbb177bee18dbdeed21fb90e74378e2081436ad5bf116b36ad5077fe382df30 -address /run/containerd/containerd.sock

0 Ss \_ /bin/bash /usr/local/bin/run.sh

0 S \_ nginx: master process nginx -g daemon off;

65534 S \_ nginx: worker process

0 Ss /lib/systemd/systemd --user

0 S \_ (sd-pam)

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id 6669168db70db4e6c741e8a047942af06dd745fae4d594291d1d6e1077b05082 -address /run/containerd/containerd.sock

0 Ss \_ /pause

0 Sl /usr/bin/containerd-shim-runc-v2 -namespace moby -id d5fa78fa31f11a4c5fb9fd2e853a00f0e60e414a7bce2e0d8fcd1f6ab2b30074 -address /run/containerd/containerd.sock

101 Ss \_ /usr/bin/dumb-init -- /nginx-ingress-controller --publish-service=ingress-nginx/ingress-nginx-controller --election-id=ingress-controller-leader --ingress-class=nginx --configmap=ingress-nginx/ingress-nginx-controller --validating-webhook=:8443 --validating-webhook-certificate=/usr/local/certificates/cert --validating-webhook-key=/usr/local/certificates/key

101 Ssl \_ /nginx-ingress-controller --publish-service=ingress-nginx/ingress-nginx-controller --election-id=ingress-controller-leader --ingress-class=nginx --configmap=ingress-nginx/ingress-nginx-controller --validating-webhook=:8443 --validating-webhook-certificate=/usr/local/certificates/cert --validating-webhook-key=/usr/local/certificates/key

101 S \_ nginx: master process /usr/local/nginx/sbin/nginx -c /etc/nginx/nginx.conf

101 Sl \_ nginx: worker process

101 Sl \_ nginx: worker process

101 Sl \_ nginx: worker process

101 Sl \_ nginx: worker process

101 S \_ nginx: cache manager process

There’s a lot going on there. Some bits are obvious; we can see the nginx ingress controller, our echoserver (the other nginx process hanging off /usr/local/bin/run.sh), and some things that look related to weave. The rest appears to be Kubernetes-related infrastructure.

kube-scheduler, kube-controller-manager, kube-apiserver, kube-proxy all look like core Kubernetes bits. etcd is a distributed, reliable key-value store. coredns is a DNS server, with plugins for Kubernetes and etcd.

What does Docker claim is happening?

docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

d5fa78fa31f1 k8s.gcr.io/ingress-nginx/controller "/usr/bin/dumb-init …" 3 days ago Up 3 days k8s_controller_ingress-nginx-controller-5b74bc9868-bczdr_ingress-nginx_4d7d3d81-a769-4de9-a4fb-04763b7c1605_0

6669168db70d k8s.gcr.io/pause:3.4.1 "/pause" 3 days ago Up 3 days k8s_POD_ingress-nginx-controller-5b74bc9868-bczdr_ingress-nginx_4d7d3d81-a769-4de9-a4fb-04763b7c1605_0

7cbb177bee18 k8s.gcr.io/echoserver "/usr/local/bin/run.…" 3 days ago Up 3 days k8s_echoserver_hello-node-59bffcc9fd-8hkgb_default_c7111c9e-7131-40e0-876d-be89d5ca1812_0

62b369de8d8c k8s.gcr.io/pause:3.4.1 "/pause" 3 days ago Up 3 days k8s_POD_hello-node-59bffcc9fd-8hkgb_default_c7111c9e-7131-40e0-876d-be89d5ca1812_0

649a507d4583 296a6d5035e2 "/coredns -conf /etc…" 4 days ago Up 4 days k8s_coredns_coredns-558bd4d5db-flrfq_kube-system_f8b2b52e-6673-4966-82b1-3fbe052a0297_0

4a30785f9187 296a6d5035e2 "/coredns -conf /etc…" 4 days ago Up 4 days k8s_coredns_coredns-558bd4d5db-4nvrg_kube-system_1976f4d6-647c-45ca-b268-95f071f064d5_0

9ffd6b668ddf k8s.gcr.io/pause:3.4.1 "/pause" 4 days ago Up 4 days k8s_POD_coredns-558bd4d5db-flrfq_kube-system_f8b2b52e-6673-4966-82b1-3fbe052a0297_0

534c0a698478 k8s.gcr.io/pause:3.4.1 "/pause" 4 days ago Up 4 days k8s_POD_coredns-558bd4d5db-4nvrg_kube-system_1976f4d6-647c-45ca-b268-95f071f064d5_0

36b418e69ae7 df29c0a4002c "/home/weave/launch.…" 4 days ago Up 4 days k8s_weave_weave-net-mchmg_kube-system_b9af9615-8cde-4a18-8555-6da1f51b7136_1

48d735f7f44e weaveworks/weave-npc "/usr/bin/launch.sh" 4 days ago Up 4 days k8s_weave-npc_weave-net-mchmg_kube-system_b9af9615-8cde-4a18-8555-6da1f51b7136_0

7104f65b5d92 k8s.gcr.io/pause:3.4.1 "/pause" 4 days ago Up 4 days k8s_POD_weave-net-mchmg_kube-system_b9af9615-8cde-4a18-8555-6da1f51b7136_0

26d92a720c56 4359e752b596 "/usr/local/bin/kube…" 4 days ago Up 4 days k8s_kube-proxy_kube-proxy-6d8kg_kube-system_8bf2d7ec-4850-427f-860f-465a9ff84841_0

73fae81715b6 k8s.gcr.io/pause:3.4.1 "/pause" 4 days ago Up 4 days k8s_POD_kube-proxy-6d8kg_kube-system_8bf2d7ec-4850-427f-860f-465a9ff84841_0

89f35bf7a825 771ffcf9ca63 "kube-apiserver --ad…" 4 days ago Up 4 days k8s_kube-apiserver_kube-apiserver-udon_kube-system_1af8c5f362b7b02269f4d244cb0e6fbf_0

afa9798c9f66 a4183b88f6e6 "kube-scheduler --au…" 4 days ago Up 4 days k8s_kube-scheduler_kube-scheduler-udon_kube-system_629dc49dfd9f7446eb681f1dcffe6d74_0

2dabff6e4f59 0369cf4303ff "etcd --advertise-cl…" 4 days ago Up 4 days k8s_etcd_etcd-udon_kube-system_c2a3008c1d9895f171cd394e38656ea0_0

4b3708b62f4d e16544fd47b0 "kube-controller-man…" 4 days ago Up 4 days k8s_kube-controller-manager_kube-controller-manager-udon_kube-system_1d1b9018c3c6e7aa2e803c6e9ccd2eab_0

fd95c597ff31 k8s.gcr.io/pause:3.4.1 "/pause" 4 days ago Up 4 days k8s_POD_kube-scheduler-udon_kube-system_629dc49dfd9f7446eb681f1dcffe6d74_0

589c1545d9e0 k8s.gcr.io/pause:3.4.1 "/pause" 4 days ago Up 4 days k8s_POD_kube-controller-manager-udon_kube-system_1d1b9018c3c6e7aa2e803c6e9ccd2eab_0

6f417fd8a8c5 k8s.gcr.io/pause:3.4.1 "/pause" 4 days ago Up 4 days k8s_POD_kube-apiserver-udon_kube-system_1af8c5f362b7b02269f4d244cb0e6fbf_0

c2ff2c50f0bc k8s.gcr.io/pause:3.4.1 "/pause" 4 days ago Up 4 days k8s_POD_etcd-udon_kube-system_c2a3008c1d9895f171cd394e38656ea0_0

Ok, that’s interesting. Before we dig into it, what does Kubernetes say? (I’ve trimmed the RESTARTS + AGE columns to make things fit a bit better here; they weren’t interesting).

noodles@udon:~$ kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS

default hello-node-59bffcc9fd-8hkgb 1/1 Running

ingress-nginx ingress-nginx-admission-create-8jgkt 0/1 Completed

ingress-nginx ingress-nginx-admission-patch-jdq4t 0/1 Completed

ingress-nginx ingress-nginx-controller-5b74bc9868-bczdr 1/1 Running

kube-system coredns-558bd4d5db-4nvrg 1/1 Running

kube-system coredns-558bd4d5db-flrfq 1/1 Running

kube-system etcd-udon 1/1 Running

kube-system kube-apiserver-udon 1/1 Running

kube-system kube-controller-manager-udon 1/1 Running

kube-system kube-proxy-6d8kg 1/1 Running

kube-system kube-scheduler-udon 1/1 Running

kube-system weave-net-mchmg 2/2 Running

So there are a lot more Docker instances running than Kubernetes pods. What’s happening there? Well, it turns out that Kubernetes builds pods from multiple different Docker instances. If you think of a traditional container as being composed of a set of namespaces (process, network, hostname etc) and a cgroup, then a pod is made up of the namespaces, and each Docker instance within that pod has its own cgroup. Ian Lewis has a much deeper discussion in What are Kubernetes Pods Anyway?, but my takeaway is that a pod is a set of sort-of containers that are coupled. We can see this more clearly if we ask systemd for the cgroup breakdown:

systemd-cgls

Control group /:

-.slice

├─user.slice

│ ├─user-0.slice

│ │ ├─session-29.scope

│ │ │ ├─ 515899 sshd: root@pts/1

│ │ │ ├─ 515913 -bash

│ │ │ ├─3519743 systemd-cgls

│ │ │ └─3519744 cat

│ │ └─user@0.service …

│ │ └─init.scope

│ │ ├─515902 /lib/systemd/systemd --user

│ │ └─515903 (sd-pam)

│ └─user-1000.slice

│ ├─user@1000.service …

│ │ └─init.scope

│ │ ├─2564011 /lib/systemd/systemd --user

│ │ └─2564012 (sd-pam)

│ └─session-110.scope

│ ├─2564007 sshd: noodles [priv]

│ ├─2564040 sshd: noodles@pts/0

│ └─2564041 -bash

├─init.scope

│ └─1 /sbin/init

├─system.slice

│ ├─containerd.service …

│ │ ├─ 21383 /usr/bin/containerd-shim-runc-v2 -namespace moby -id fd95c597ff31…

│ │ ├─ 21408 /usr/bin/containerd-shim-runc-v2 -namespace moby -id c2ff2c50f0bc…

│ │ ├─ 21432 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 589c1545d9e0…

│ │ ├─ 21459 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 6f417fd8a8c5…

│ │ ├─ 21582 /usr/bin/containerd-shim-runc-v2 -namespace moby -id afa9798c9f66…

│ │ ├─ 21607 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 4b3708b62f4d…

│ │ ├─ 21640 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 89f35bf7a825…

│ │ ├─ 21648 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 2dabff6e4f59…

│ │ ├─ 22343 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 73fae81715b6…

│ │ ├─ 22391 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 26d92a720c56…

│ │ ├─ 26992 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 7104f65b5d92…

│ │ ├─ 27405 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 48d735f7f44e…

│ │ ├─ 27531 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 36b418e69ae7…

│ │ ├─ 27941 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 534c0a698478…

│ │ ├─ 27960 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 9ffd6b668ddf…

│ │ ├─ 28131 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 4a30785f9187…

│ │ ├─ 28159 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 649a507d4583…

│ │ ├─ 514667 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 62b369de8d8c…

│ │ ├─ 514976 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 7cbb177bee18…

│ │ ├─ 698904 /usr/bin/containerd-shim-runc-v2 -namespace moby -id 6669168db70d…

│ │ ├─ 699284 /usr/bin/containerd-shim-runc-v2 -namespace moby -id d5fa78fa31f1…

│ │ └─2805479 /usr/bin/containerd

│ ├─systemd-udevd.service

│ │ └─2805502 /lib/systemd/systemd-udevd

│ ├─cron.service

│ │ └─2805474 /usr/sbin/cron -f

│ ├─docker.service …

│ │ └─528 /usr/sbin/dockerd -H fd://

│ ├─kubelet.service

│ │ └─2805501 /usr/bin/kubelet --bootstrap-kubeconfig=/etc/kubernetes/bootstrap…

│ ├─systemd-journald.service

│ │ └─2805505 /lib/systemd/systemd-journald

│ ├─ssh.service

│ │ └─2805500 sshd: /usr/sbin/sshd -D [listener] 0 of 10-100 startups

│ ├─ifup@enx00e04c6851de.service

│ │ └─2805675 /sbin/dhclient -4 -v -i -pf /run/dhclient.enx00e04c6851de.pid -lf…

│ ├─rsyslog.service

│ │ └─2805488 /usr/sbin/rsyslogd -n -iNONE

│ ├─smartmontools.service

│ │ └─2805499 /usr/sbin/smartd -n

│ ├─dbus.service

│ │ └─527 /usr/bin/dbus-daemon --system --address=systemd: --nofork --nopidfile…

│ ├─systemd-timesyncd.service

│ │ └─2805513 /lib/systemd/systemd-timesyncd

│ ├─system-getty.slice

│ │ └─getty@tty1.service

│ │ └─536 /sbin/agetty -o -p -- \u --noclear tty1 linux

│ └─systemd-logind.service

│ └─533 /lib/systemd/systemd-logind

└─kubepods.slice

├─kubepods-burstable.slice

│ ├─kubepods-burstable-pod1af8c5f362b7b02269f4d244cb0e6fbf.slice

│ │ ├─docker-6f417fd8a8c573a2b8f792af08cdcd7ce663457f0f7218c8d55afa3732e6ee94.scope …

│ │ │ └─21493 /pause

│ │ └─docker-89f35bf7a825eb97db7035d29aa475a3a1c8aaccda0860a46388a3a923cd10bc.scope …

│ │ └─21699 kube-apiserver --advertise-address=192.168.33.147 --allow-privi…

│ ├─kubepods-burstable-podf8b2b52e_6673_4966_82b1_3fbe052a0297.slice

│ │ ├─docker-649a507d45831aca1de5231b49afc8ff37d90add813e7ecd451d12eedd785b0c.scope …

│ │ │ └─28187 /coredns -conf /etc/coredns/Corefile

│ │ └─docker-9ffd6b668ddfbf3c64c6783bc6f4f6cc9e92bfb16c83fb214c2cbb4044993bf0.scope …

│ │ └─27987 /pause

│ ├─kubepods-burstable-podc2a3008c1d9895f171cd394e38656ea0.slice

│ │ ├─docker-c2ff2c50f0bc052feda2281741c4f37df7905e3b819294ec645148ae13c3fe1b.scope …

│ │ │ └─21481 /pause

│ │ └─docker-2dabff6e4f59c96d931d95781d28314065b46d0e6f07f8c65dc52aa465f69456.scope …

│ │ └─21701 etcd --advertise-client-urls=https://192.168.33.147:2379 --cert…

│ ├─kubepods-burstable-pod629dc49dfd9f7446eb681f1dcffe6d74.slice

│ │ ├─docker-fd95c597ff3171ff110b7bf440229e76c5108d5d93be75ffeab54869df734413.scope …

│ │ │ └─21491 /pause

│ │ └─docker-afa9798c9f663b21df8f38d9634469e6b4db0984124547cd472a7789c61ef752.scope …

│ │ └─21680 kube-scheduler --authentication-kubeconfig=/etc/kubernetes/sche…

│ ├─kubepods-burstable-podb9af9615_8cde_4a18_8555_6da1f51b7136.slice

│ │ ├─docker-48d735f7f44e3944851563f03f32c60811f81409e7378641404035dffd8c1eb4.scope …

│ │ │ ├─27424 /usr/bin/weave-npc

│ │ │ └─27458 /usr/sbin/ulogd -v

│ │ ├─docker-36b418e69ae7076fe5a44d16cef223d8908016474cb65910f2fd54cca470566b.scope …

│ │ │ ├─27549 /bin/sh /home/weave/launch.sh

│ │ │ ├─27629 /home/weave/weaver --port=6783 --datapath=datapath --name=12:82…

│ │ │ └─27825 /home/weave/kube-utils -run-reclaim-daemon -node-name=udon -pee…

│ │ └─docker-7104f65b5d92a56a2df93514ed0a78cfd1090ca47b6ce4e0badc43be6c6c538e.scope …

│ │ └─27011 /pause

│ ├─kubepods-burstable-pod4d7d3d81_a769_4de9_a4fb_04763b7c1605.slice

│ │ ├─docker-6669168db70db4e6c741e8a047942af06dd745fae4d594291d1d6e1077b05082.scope …

│ │ │ └─698925 /pause

│ │ └─docker-d5fa78fa31f11a4c5fb9fd2e853a00f0e60e414a7bce2e0d8fcd1f6ab2b30074.scope …

│ │ ├─ 699303 /usr/bin/dumb-init -- /nginx-ingress-controller --publish-ser…

│ │ ├─ 699316 /nginx-ingress-controller --publish-service=ingress-nginx/ing…

│ │ ├─ 699405 nginx: master process /usr/local/nginx/sbin/nginx -c /etc/ngi…

│ │ ├─1075085 nginx: worker process

│ │ ├─1075086 nginx: worker process

│ │ ├─1075087 nginx: worker process

│ │ ├─1075088 nginx: worker process

│ │ └─1075089 nginx: cache manager process

│ ├─kubepods-burstable-pod1976f4d6_647c_45ca_b268_95f071f064d5.slice

│ │ ├─docker-4a30785f91873a7e6a191e86928a789760a054e4fa6dcd7048a059b42cf19edf.scope …

│ │ │ └─28178 /coredns -conf /etc/coredns/Corefile

│ │ └─docker-534c0a698478599277482d97a137fab8ef4d62db8a8a5cf011b4bead28246f70.scope …

│ │ └─27995 /pause

│ └─kubepods-burstable-pod1d1b9018c3c6e7aa2e803c6e9ccd2eab.slice

│ ├─docker-589c1545d9e0cdf8ea391745c54c8f4db49f5f437b1a2e448e7744b2c12f8856.scope …

│ │ └─21489 /pause

│ └─docker-4b3708b62f4d427690f5979848c59fce522dab6c62a9c53b806ffbaef3f88e62.scope …

│ └─21690 kube-controller-manager --authentication-kubeconfig=/etc/kubern…

└─kubepods-besteffort.slice

├─kubepods-besteffort-podc7111c9e_7131_40e0_876d_be89d5ca1812.slice

│ ├─docker-62b369de8d8cece4d33ec9fda4d23a9718379a8df8b30173d68f20bff830fed2.scope …

│ │ └─514688 /pause

│ └─docker-7cbb177bee18dbdeed21fb90e74378e2081436ad5bf116b36ad5077fe382df30.scope …

│ ├─514999 /bin/bash /usr/local/bin/run.sh

│ ├─515039 nginx: master process nginx -g daemon off;

│ └─515040 nginx: worker process

└─kubepods-besteffort-pod8bf2d7ec_4850_427f_860f_465a9ff84841.slice

├─docker-73fae81715b670255b66419a7959798b287be7bbb41e96f8b711fa529aa02f0d.scope …

│ └─22364 /pause

└─docker-26d92a720c560caaa5f8a0217bc98e486b1c032af6c7c5d75df508021d462878.scope …

└─22412 /usr/local/bin/kube-proxy --config=/var/lib/kube-proxy/config.c…

Again, there’s a lot going on here, but if you look for the kubepods.slice piece then you can see our pods are divided into two sets, kubepods-burstable.slice and kubepods-besteffort.slice. Under those you can see the individual pods, all of which have at least 2 separate cgroups, one of which is running /pause. Turns out this is a generic Kubernetes image which basically performs the process reaping that an init process would do on a normal system; it just sits and waits for processes to exit and cleans them up. Again, Ian Lewis has more details on the pause container.
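To tie the cgroup view back to the docker ps listing, here is a minimal Python sketch that groups container names by pod and matches them to the pod slices. It assumes the k8s_<container>_<pod>_<namespace>_<uid>_<attempt> naming pattern that the listing appears to follow, and that the slice name is the pod UID with dashes swapped for underscores; both are inferred from the output above rather than taken from any documentation. The function names are my own, and only the burstable and besteffort slices seen here are handled.

```python
import re
from collections import defaultdict

# A few names copied from the NAMES column of the docker ps output.
DOCKER_NAMES = [
    "k8s_weave-npc_weave-net-mchmg_kube-system_b9af9615-8cde-4a18-8555-6da1f51b7136_0",
    "k8s_POD_weave-net-mchmg_kube-system_b9af9615-8cde-4a18-8555-6da1f51b7136_0",
    "k8s_kube-proxy_kube-proxy-6d8kg_kube-system_8bf2d7ec-4850-427f-860f-465a9ff84841_0",
    "k8s_POD_kube-proxy-6d8kg_kube-system_8bf2d7ec-4850-427f-860f-465a9ff84841_0",
]

# Two pod slices copied from the systemd-cgls output.
POD_SLICES = [
    "kubepods-burstable-podb9af9615_8cde_4a18_8555_6da1f51b7136.slice",
    "kubepods-besteffort-pod8bf2d7ec_4850_427f_860f_465a9ff84841.slice",
]


def parse_container_name(name):
    """Split a k8s_... container name into its parts (assumed layout)."""
    prefix, container, pod, namespace, uid, attempt = name.split("_")
    if prefix != "k8s":
        raise ValueError(f"not a Kubernetes-managed container: {name}")
    return {"container": container, "pod": pod, "namespace": namespace,
            "uid": uid, "attempt": int(attempt)}


def parse_pod_slice(slice_name):
    """Return (qos_class, pod_uid) for a kubepods-*-pod<uid>.slice name."""
    match = re.fullmatch(
        r"kubepods-(burstable|besteffort)-pod([0-9a-f_]+)\.slice", slice_name)
    if match is None:
        raise ValueError(f"unrecognised pod slice: {slice_name}")
    # The slice name uses underscores where the pod UID has dashes.
    return match.group(1), match.group(2).replace("_", "-")


def containers_by_pod_uid(names):
    """Map pod UID -> list of container names inside that pod."""
    pods = defaultdict(list)
    for name in names:
        info = parse_container_name(name)
        pods[info["uid"]].append(info["container"])
    return dict(pods)


PODS = containers_by_pod_uid(DOCKER_NAMES)
for slice_name in POD_SLICES:
    qos, uid = parse_pod_slice(slice_name)
    print(qos, uid, PODS[uid])
```

For the sample data this pairs the burstable slice with the weave-npc and POD (pause) containers, and the besteffort slice with kube-proxy and its own pause container, which matches what the cgroup tree shows.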

Finally let’s dig into the actual containers. The pause container seems like a good place to start. We can examine the details of where the filesystem is (the path may differ if you’re not using the overlay2 storage driver). The hex string is the container ID listed by docker ps.

# docker inspect --format='{{.GraphDriver.Data.MergedDir}}' 6669168db70d

/var/lib/docker/overlay2/5a2d76012476349e6b58eb6a279bac400968cefae8537082ea873b2e791ff3c6/merged

# cd /var/lib/docker/overlay2/5a2d76012476349e6b58eb6a279bac400968cefae8537082ea873b2e791ff3c6/merged

# find . | sed -e 's;^./;;'

pause

proc

.dockerenv

etc

etc/resolv.conf

etc/hostname

etc/mtab

etc/hosts

sys

dev

dev/shm

dev/pts

dev/console

# file pause

pause: ELF 64-bit LSB executable, x86-64, version 1 (GNU/Linux), statically linked, for GNU/Linux 3.2.0, BuildID[sha1]=d35dab7152881e37373d819f6864cd43c0124a65, stripped

This is a nice, minimal container. The pause binary is statically linked, so there are no extra libraries required and it’s just a basic set of support devices and files. I doubt the pieces in /etc are even required. Let’s try the echoserver next:

# docker inspect --format='{{.GraphDriver.Data.MergedDir}}' 7cbb177bee18

/var/lib/docker/overlay2/09042bc1aff16a9cba43f1a6a68f7786c4748e989a60833ec7417837c4bfaacb/merged

# cd /var/lib/docker/overlay2/09042bc1aff16a9cba43f1a6a68f7786c4748e989a60833ec7417837c4bfaacb/merged

# find . | wc -l

3358

Wow. That’s a lot more stuff. Poking /etc/os-release shows why:

# grep PRETTY etc/os-release

PRETTY_NAME="Ubuntu 16.04.2 LTS"

Aha. It’s an Ubuntu-based image. We can cut straight to the chase with the nginx ingress container:

# docker exec d5fa78fa31f1 grep PRETTY /etc/os-release

PRETTY_NAME="Alpine Linux v3.13"

That’s a bit more reasonable an image for a container; Alpine Linux is a much smaller distro.

I don’t feel there’s a lot more poking to do here. It’s not something I’d expect to do on a normal Kubernetes setup, but I wanted to dig under the hood to make sure it really was just a normal container situation. I think the next steps involve adding a bit more complexity - that means building a cluster with more than a single node, and then running an application that’s a bit more complicated. That should help explore two major advantages of running this sort of setup: resiliency from a node dying, and the ability to scale out beyond what a single node can do.

Trying to understand Kubernetes networking

I previously built a single node Kubernetes cluster as a test environment to learn more about it. The first thing I want to try to understand is its networking. In particular the IP addresses that are listed are all 10.* and my host’s network is 192.168.53.0/24. I understand each pod gets its own virtual ethernet interface and associated IP address, and these are generally private within the cluster (and firewalled out other than for exposed services). What does that actually look like?

$ ip route

default via 192.168.53.1 dev enx00e04c6851de

172.17.0.0/16 dev docker0 proto kernel scope link src 172.17.0.1 linkdown

192.168.0.0/24 dev weave proto kernel scope link src 192.168.0.1

192.168.53.0/24 dev enx00e04c6851de proto kernel scope link src 192.168.53.147

Huh. No sign of any way to get to 10.107.66.138 (the IP on which my echoserver from the previous post is available directly from the host). What about network interfaces? (under the cut because it’s lengthy)

ip addr

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

2: enx00e04c6851de: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:e0:4c:68:51:de brd ff:ff:ff:ff:ff:ff

inet 192.168.53.147/24 brd 192.168.53.255 scope global dynamic enx00e04c6851de

valid_lft 41571sec preferred_lft 41571sec

3: wlp1s0: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 74:d8:3e:70:3b:18 brd ff:ff:ff:ff:ff:ff

4: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:18:04:9e:08 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

5: datapath: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1376 qdisc noqueue state UNKNOWN group default qlen 1000

link/ether d2:5a:fd:c1:56:23 brd ff:ff:ff:ff:ff:ff

7: weave: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1376 qdisc noqueue state UP group default qlen 1000

link/ether 12:82:8f:ed:c7:bf brd ff:ff:ff:ff:ff:ff

inet 192.168.0.1/24 brd 192.168.0.255 scope global weave

valid_lft forever preferred_lft forever

9: vethwe-datapath@vethwe-bridge: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1376 qdisc noqueue master datapath state UP group default

link/ether b6:49:88:d6:6d:84 brd ff:ff:ff:ff:ff:ff

10: vethwe-bridge@vethwe-datapath: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1376 qdisc noqueue master weave state UP group default

link/ether 6e:6c:03:1d:e5:0e brd ff:ff:ff:ff:ff:ff

11: vxlan-6784: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 65535 qdisc noqueue master datapath state UNKNOWN group default qlen 1000

link/ether 9a:af:c5:0a:b3:fd brd ff:ff:ff:ff:ff:ff

13: vethwepl534c0a6@if12: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1376 qdisc noqueue master weave state UP group default

link/ether 1e:ac:f1:85:61:9a brd ff:ff:ff:ff:ff:ff link-netnsid 0

15: vethwepl9ffd6b6@if14: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1376 qdisc noqueue master weave state UP group default

link/ether 56:ca:71:2a:ab:39 brd ff:ff:ff:ff:ff:ff link-netnsid 1

17: vethwepl62b369d@if16: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1376 qdisc noqueue master weave state UP group default

link/ether e2:a0:bb:ee:fc:73 brd ff:ff:ff:ff:ff:ff link-netnsid 2

23: vethwepl6669168@if22: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1376 qdisc noqueue master weave state UP group default

link/ether f2:e7:e6:95:e0:61 brd ff:ff:ff:ff:ff:ff link-netnsid 3

That looks like a collection of virtual ethernet devices that are being managed by the weave networking plugin, and presumably partnered inside each pod. They’re bridged to the weave interface (the master weave bit). Still no clues about the 10.* range. What about ARP?

ip neigh

192.168.53.1 dev enx00e04c6851de lladdr e4:8d:8c:35:98:d5 DELAY

192.168.0.4 dev datapath lladdr da:22:06:96:50:cb STALE

192.168.0.2 dev weave lladdr 66:eb:ce:16:3c:62 REACHABLE

192.168.53.136 dev enx00e04c6851de lladdr 00:e0:4c:39:f2:54 REACHABLE

192.168.0.6 dev weave lladdr 56:a9:f0:d2:9e:f3 STALE

192.168.0.3 dev datapath lladdr f2:42:c9:c3:08:71 STALE

192.168.0.3 dev weave lladdr f2:42:c9:c3:08:71 REACHABLE

192.168.0.2 dev datapath lladdr 66:eb:ce:16:3c:62 STALE

192.168.0.6 dev datapath lladdr 56:a9:f0:d2:9e:f3 STALE

192.168.0.4 dev weave lladdr da:22:06:96:50:cb STALE

192.168.0.5 dev datapath lladdr fe:6f:1b:14:56:5a STALE

192.168.0.5 dev weave lladdr fe:6f:1b:14:56:5a REACHABLE

Nope. That just looks like addresses on the weave managed bridge. Alright. What about firewalling?

nft list ruleset

table ip nat {

chain DOCKER {

iifname "docker0" counter packets 0 bytes 0 return

}

chain POSTROUTING {

type nat hook postrouting priority srcnat; policy accept;

counter packets 531750 bytes 31913539 jump KUBE-POSTROUTING

oifname != "docker0" ip saddr 172.17.0.0/16 counter packets 1 bytes 84 masquerade

counter packets 525600 bytes 31544134 jump WEAVE

}

chain PREROUTING {

type nat hook prerouting priority dstnat; policy accept;

counter packets 180 bytes 12525 jump KUBE-SERVICES

fib daddr type local counter packets 23 bytes 1380 jump DOCKER

}

chain OUTPUT {

type nat hook output priority -100; policy accept;

counter packets 527005 bytes 31628455 jump KUBE-SERVICES

ip daddr != 127.0.0.0/8 fib daddr type local counter packets 285425 bytes 17125524 jump DOCKER

}

chain KUBE-MARK-DROP {

counter packets 0 bytes 0 meta mark set mark or 0x8000

}

chain KUBE-MARK-MASQ {

counter packets 0 bytes 0 meta mark set mark or 0x4000

}

chain KUBE-POSTROUTING {

mark and 0x4000 != 0x4000 counter packets 4622 bytes 277720 return

counter packets 0 bytes 0 meta mark set mark xor 0x4000

counter packets 0 bytes 0 masquerade

}

chain KUBE-KUBELET-CANARY {

}

chain INPUT {

type nat hook input priority 100; policy accept;

}

chain KUBE-PROXY-CANARY {

}

chain KUBE-SERVICES {

meta l4proto tcp ip daddr 10.96.0.10 tcp dport 9153 counter packets 0 bytes 0 jump KUBE-SVC-JD5MR3NA4I4DYORP

meta l4proto tcp ip daddr 10.107.66.138 tcp dport 8080 counter packets 1 bytes 60 jump KUBE-SVC-666FUMINWJLRRQPD

meta l4proto tcp ip daddr 10.111.16.129 tcp dport 443 counter packets 0 bytes 0 jump KUBE-SVC-EZYNCFY2F7N6OQA2

meta l4proto tcp ip daddr 10.96.9.41 tcp dport 443 counter packets 0 bytes 0 jump KUBE-SVC-EDNDUDH2C75GIR6O

meta l4proto tcp ip daddr 192.168.53.147 tcp dport 443 counter packets 0 bytes 0 jump KUBE-XLB-EDNDUDH2C75GIR6O

meta l4proto tcp ip daddr 10.96.9.41 tcp dport 80 counter packets 0 bytes 0 jump KUBE-SVC-CG5I4G2RS3ZVWGLK

meta l4proto tcp ip daddr 192.168.53.147 tcp dport 80 counter packets 0 bytes 0 jump KUBE-XLB-CG5I4G2RS3ZVWGLK

meta l4proto tcp ip daddr 10.96.0.1 tcp dport 443 counter packets 0 bytes 0 jump KUBE-SVC-NPX46M4PTMTKRN6Y

meta l4proto udp ip daddr 10.96.0.10 udp dport 53 counter packets 0 bytes 0 jump KUBE-SVC-TCOU7JCQXEZGVUNU

meta l4proto tcp ip daddr 10.96.0.10 tcp dport 53 counter packets 0 bytes 0 jump KUBE-SVC-ERIFXISQEP7F7OF4

fib daddr type local counter packets 3312 bytes 198720 jump KUBE-NODEPORTS

}

chain KUBE-NODEPORTS {

meta l4proto tcp tcp dport 31529 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp tcp dport 31529 counter packets 0 bytes 0 jump KUBE-SVC-666FUMINWJLRRQPD

meta l4proto tcp ip saddr 127.0.0.0/8 tcp dport 30894 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp tcp dport 30894 counter packets 0 bytes 0 jump KUBE-XLB-EDNDUDH2C75GIR6O

meta l4proto tcp ip saddr 127.0.0.0/8 tcp dport 32740 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp tcp dport 32740 counter packets 0 bytes 0 jump KUBE-XLB-CG5I4G2RS3ZVWGLK

}

chain KUBE-SVC-NPX46M4PTMTKRN6Y {

counter packets 0 bytes 0 jump KUBE-SEP-Y6PHKONXBG3JINP2

}

chain KUBE-SEP-Y6PHKONXBG3JINP2 {

ip saddr 192.168.53.147 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp counter packets 0 bytes 0 dnat to 192.168.53.147:6443

}

chain WEAVE {

# match-set weaver-no-masq-local dst counter packets 135966 bytes 8160820 return

ip saddr 192.168.0.0/24 ip daddr 224.0.0.0/4 counter packets 0 bytes 0 return

ip saddr != 192.168.0.0/24 ip daddr 192.168.0.0/24 counter packets 0 bytes 0 masquerade

ip saddr 192.168.0.0/24 ip daddr != 192.168.0.0/24 counter packets 33 bytes 2941 masquerade

}

chain WEAVE-CANARY {

}

chain KUBE-SVC-JD5MR3NA4I4DYORP {

counter packets 0 bytes 0 jump KUBE-SEP-6JI23ZDEH4VLR5EN

counter packets 0 bytes 0 jump KUBE-SEP-FATPLMAF37ZNQP5P

}

chain KUBE-SEP-6JI23ZDEH4VLR5EN {

ip saddr 192.168.0.2 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp counter packets 0 bytes 0 dnat to 192.168.0.2:9153

}

chain KUBE-SVC-TCOU7JCQXEZGVUNU {

counter packets 0 bytes 0 jump KUBE-SEP-JTN4UBVS7OG5RONX

counter packets 0 bytes 0 jump KUBE-SEP-4TCKAEJ6POVEFPVW

}

chain KUBE-SEP-JTN4UBVS7OG5RONX {

ip saddr 192.168.0.2 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto udp counter packets 0 bytes 0 dnat to 192.168.0.2:53

}

chain KUBE-SVC-ERIFXISQEP7F7OF4 {

counter packets 0 bytes 0 jump KUBE-SEP-UPZX2EM3TRFH2ASL

counter packets 0 bytes 0 jump KUBE-SEP-KPHYKKPVMB473Z76

}

chain KUBE-SEP-UPZX2EM3TRFH2ASL {

ip saddr 192.168.0.2 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp counter packets 0 bytes 0 dnat to 192.168.0.2:53

}

chain KUBE-SEP-4TCKAEJ6POVEFPVW {

ip saddr 192.168.0.3 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto udp counter packets 0 bytes 0 dnat to 192.168.0.3:53

}

chain KUBE-SEP-KPHYKKPVMB473Z76 {

ip saddr 192.168.0.3 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp counter packets 0 bytes 0 dnat to 192.168.0.3:53

}

chain KUBE-SEP-FATPLMAF37ZNQP5P {

ip saddr 192.168.0.3 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp counter packets 0 bytes 0 dnat to 192.168.0.3:9153

}

chain KUBE-SVC-666FUMINWJLRRQPD {

counter packets 1 bytes 60 jump KUBE-SEP-LYLDBZYLHY4MT3AQ

}

chain KUBE-SEP-LYLDBZYLHY4MT3AQ {

ip saddr 192.168.0.4 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp counter packets 1 bytes 60 dnat to 192.168.0.4:8080

}

chain KUBE-XLB-EDNDUDH2C75GIR6O {

fib saddr type local counter packets 0 bytes 0 jump KUBE-MARK-MASQ

fib saddr type local counter packets 0 bytes 0 jump KUBE-SVC-EDNDUDH2C75GIR6O

counter packets 0 bytes 0 jump KUBE-SEP-BLQHCYCSXY3NRKLC

}

chain KUBE-XLB-CG5I4G2RS3ZVWGLK {

fib saddr type local counter packets 0 bytes 0 jump KUBE-MARK-MASQ

fib saddr type local counter packets 0 bytes 0 jump KUBE-SVC-CG5I4G2RS3ZVWGLK

counter packets 0 bytes 0 jump KUBE-SEP-5XVRKWM672JGTWXH

}

chain KUBE-SVC-EDNDUDH2C75GIR6O {

counter packets 0 bytes 0 jump KUBE-SEP-BLQHCYCSXY3NRKLC

}

chain KUBE-SEP-BLQHCYCSXY3NRKLC {

ip saddr 192.168.0.5 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp counter packets 0 bytes 0 dnat to 192.168.0.5:443

}

chain KUBE-SVC-CG5I4G2RS3ZVWGLK {

counter packets 0 bytes 0 jump KUBE-SEP-5XVRKWM672JGTWXH

}

chain KUBE-SEP-5XVRKWM672JGTWXH {

ip saddr 192.168.0.5 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp counter packets 0 bytes 0 dnat to 192.168.0.5:80

}

chain KUBE-SVC-EZYNCFY2F7N6OQA2 {

counter packets 0 bytes 0 jump KUBE-SEP-JYW326XAJ4KK7QPG

}

chain KUBE-SEP-JYW326XAJ4KK7QPG {

ip saddr 192.168.0.5 counter packets 0 bytes 0 jump KUBE-MARK-MASQ

meta l4proto tcp counter packets 0 bytes 0 dnat to 192.168.0.5:8443

}

}

table ip filter {

chain DOCKER {

}

chain DOCKER-ISOLATION-STAGE-1 {

iifname "docker0" oifname != "docker0" counter packets 0 bytes 0 jump DOCKER-ISOLATION-STAGE-2

counter packets 0 bytes 0 return

}

chain DOCKER-ISOLATION-STAGE-2 {

oifname "docker0" counter packets 0 bytes 0 drop

counter packets 0 bytes 0 return

}

chain FORWARD {

type filter hook forward priority filter; policy drop;

iifname "weave" counter packets 213 bytes 54014 jump WEAVE-NPC-EGRESS

oifname "weave" counter packets 150 bytes 30038 jump WEAVE-NPC

oifname "weave" ct state new counter packets 0 bytes 0 log group 86

oifname "weave" counter packets 0 bytes 0 drop

iifname "weave" oifname != "weave" counter packets 33 bytes 2941 accept

oifname "weave" ct state related,established counter packets 0 bytes 0 accept

counter packets 0 bytes 0 jump KUBE-FORWARD

ct state new counter packets 0 bytes 0 jump KUBE-SERVICES

ct state new counter packets 0 bytes 0 jump KUBE-EXTERNAL-SERVICES

counter packets 0 bytes 0 jump DOCKER-USER

counter packets 0 bytes 0 jump DOCKER-ISOLATION-STAGE-1

oifname "docker0" ct state related,established counter packets 0 bytes 0 accept

oifname "docker0" counter packets 0 bytes 0 jump DOCKER

iifname "docker0" oifname != "docker0" counter packets 0 bytes 0 accept

iifname "docker0" oifname "docker0" counter packets 0 bytes 0 accept

}

chain DOCKER-USER {

counter packets 0 bytes 0 return

}

chain KUBE-FIREWALL {

mark and 0x8000 == 0x8000 counter packets 0 bytes 0 drop

ip saddr != 127.0.0.0/8 ip daddr 127.0.0.0/8 ct status dnat counter packets 0 bytes 0 drop

}

chain OUTPUT {

type filter hook output priority filter; policy accept;

ct state new counter packets 527014 bytes 31628984 jump KUBE-SERVICES

counter packets 36324809 bytes 6021214027 jump KUBE-FIREWALL

meta l4proto != esp mark and 0x20000 == 0x20000 counter packets 0 bytes 0 drop

}

chain INPUT {

type filter hook input priority filter; policy accept;

counter packets 35869492 bytes 5971008896 jump KUBE-NODEPORTS

ct state new counter packets 390938 bytes 23457377 jump KUBE-EXTERNAL-SERVICES

counter packets 36249774 bytes 6030068622 jump KUBE-FIREWALL

meta l4proto tcp ip daddr 127.0.0.1 tcp dport 6784 fib saddr type != local ct state != related,established counter packets 0 bytes 0 drop

iifname "weave" counter packets 907273 bytes 88697229 jump WEAVE-NPC-EGRESS

counter packets 34809601 bytes 5818213726 jump WEAVE-IPSEC-IN

}

chain KUBE-KUBELET-CANARY {

}

chain KUBE-PROXY-CANARY {

}

chain KUBE-EXTERNAL-SERVICES {

}

chain KUBE-NODEPORTS {

meta l4proto tcp tcp dport 32196 counter packets 0 bytes 0 accept

meta l4proto tcp tcp dport 32196 counter packets 0 bytes 0 accept

}

chain KUBE-SERVICES {

}

chain KUBE-FORWARD {

ct state invalid counter packets 0 bytes 0 drop

mark and 0x4000 == 0x4000 counter packets 0 bytes 0 accept

ct state related,established counter packets 0 bytes 0 accept

ct state related,established counter packets 0 bytes 0 accept

}

chain WEAVE-NPC-INGRESS {

}

chain WEAVE-NPC-DEFAULT {

# match-set weave-;rGqyMIl1HN^cfDki~Z$3]6!N dst counter packets 14 bytes 840 accept

# match-set weave-P.B|!ZhkAr5q=XZ?3}tMBA+0 dst counter packets 0 bytes 0 accept

# match-set weave-Rzff}h:=]JaaJl/G;(XJpGjZ[ dst counter packets 0 bytes 0 accept

# match-set weave-]B*(W?)t*z5O17G044[gUo#$l dst counter packets 0 bytes 0 accept

# match-set weave-iLgO^}{o=U/*%KE[@=W:l~|9T dst counter packets 9 bytes 540 accept

}

chain WEAVE-NPC {

ct state related,established counter packets 124 bytes 28478 accept

ip daddr 224.0.0.0/4 counter packets 0 bytes 0 accept

# PHYSDEV match --physdev-out vethwe-bridge --physdev-is-bridged counter packets 3 bytes 180 accept

ct state new counter packets 23 bytes 1380 jump WEAVE-NPC-DEFAULT

ct state new counter packets 0 bytes 0 jump WEAVE-NPC-INGRESS

}

chain WEAVE-NPC-EGRESS-ACCEPT {

counter packets 48 bytes 3769 meta mark set mark or 0x40000

}

chain WEAVE-NPC-EGRESS-CUSTOM {

}

chain WEAVE-NPC-EGRESS-DEFAULT {

# match-set weave-s_+ChJId4Uy_$}G;WdH|~TK)I src counter packets 0 bytes 0 jump WEAVE-NPC-EGRESS-ACCEPT

# match-set weave-s_+ChJId4Uy_$}G;WdH|~TK)I src counter packets 0 bytes 0 return

# match-set weave-E1ney4o[ojNrLk.6rOHi;7MPE src counter packets 31 bytes 2749 jump WEAVE-NPC-EGRESS-ACCEPT

# match-set weave-E1ney4o[ojNrLk.6rOHi;7MPE src counter packets 31 bytes 2749 return

# match-set weave-41s)5vQ^o/xWGz6a20N:~?#|E src counter packets 0 bytes 0 jump WEAVE-NPC-EGRESS-ACCEPT

# match-set weave-41s)5vQ^o/xWGz6a20N:~?#|E src counter packets 0 bytes 0 return

# match-set weave-sui%__gZ}{kX~oZgI_Ttqp=Dp src counter packets 0 bytes 0 jump WEAVE-NPC-EGRESS-ACCEPT

# match-set weave-sui%__gZ}{kX~oZgI_Ttqp=Dp src counter packets 0 bytes 0 return

# match-set weave-nmMUaDKV*YkQcP5s?Q[R54Ep3 src counter packets 17 bytes 1020 jump WEAVE-NPC-EGRESS-ACCEPT

# match-set weave-nmMUaDKV*YkQcP5s?Q[R54Ep3 src counter packets 17 bytes 1020 return

}

chain WEAVE-NPC-EGRESS {

ct state related,established counter packets 907425 bytes 88746642 accept

# PHYSDEV match --physdev-in vethwe-bridge --physdev-is-bridged counter packets 0 bytes 0 return

fib daddr type local counter packets 11 bytes 640 return

ip daddr 224.0.0.0/4 counter packets 0 bytes 0 return

ct state new counter packets 50 bytes 3961 jump WEAVE-NPC-EGRESS-DEFAULT

ct state new mark and 0x40000 != 0x40000 counter packets 2 bytes 192 jump WEAVE-NPC-EGRESS-CUSTOM

}

chain WEAVE-IPSEC-IN {

}

chain WEAVE-CANARY {

}

}

table ip mangle {

chain KUBE-KUBELET-CANARY {

}

chain PREROUTING {

type filter hook prerouting priority mangle; policy accept;

}

chain INPUT {

type filter hook input priority mangle; policy accept;

counter packets 35716863 bytes 5906910315 jump WEAVE-IPSEC-IN

}

chain FORWARD {

type filter hook forward priority mangle; policy accept;

}

chain OUTPUT {

type route hook output priority mangle; policy accept;

counter packets 35804064 bytes 5938944956 jump WEAVE-IPSEC-OUT

}

chain POSTROUTING {

type filter hook postrouting priority mangle; policy accept;

}

chain KUBE-PROXY-CANARY {

}

chain WEAVE-IPSEC-IN {

}

chain WEAVE-IPSEC-IN-MARK {

counter packets 0 bytes 0 meta mark set mark or 0x20000

}

chain WEAVE-IPSEC-OUT {

}

chain WEAVE-IPSEC-OUT-MARK {

counter packets 0 bytes 0 meta mark set mark or 0x20000

}

chain WEAVE-CANARY {

}

}

Wow. That’s a lot of nftables entries, but it explains what’s going on. We have a nat entry for:

meta l4proto tcp ip daddr 10.107.66.138 tcp dport 8080 counter packets 1 bytes 60 jump KUBE-SVC-666FUMINWJLRRQPD

which ends up going to KUBE-SEP-LYLDBZYLHY4MT3AQ and:

meta l4proto tcp counter packets 1 bytes 60 dnat to 192.168.0.4:8080

So packets headed for our echoserver eventually end up in a container that has a local IP address of 192.168.0.4, which our routing table shows is reachable via the weave interface. Mystery explained. We can see the ingress for the externally visible HTTP service as well:

meta l4proto tcp ip daddr 192.168.53.147 tcp dport 80 counter packets 0 bytes 0 jump KUBE-XLB-CG5I4G2RS3ZVWGLK

which ends up redirected to:

meta l4proto tcp counter packets 0 bytes 0 dnat to 192.168.0.5:80
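The chain-following done by hand here can be sketched in Python. This is a deliberately simplified model, not a reimplementation of nftables: it works on a trimmed copy of the nat rules from the listing (counters removed), follows every jump regardless of its match conditions, and collects whatever dnat targets it reaches. The helper names and the small ROUTES table (copied from the ip route output) are my own for illustration.

```python
import ipaddress
import re

# Trimmed copy of the relevant nat chains from the ruleset listing.
RULESET = """
chain KUBE-SERVICES {
    meta l4proto tcp ip daddr 10.107.66.138 tcp dport 8080 jump KUBE-SVC-666FUMINWJLRRQPD
    meta l4proto tcp ip daddr 192.168.53.147 tcp dport 80 jump KUBE-XLB-CG5I4G2RS3ZVWGLK
}
chain KUBE-SVC-666FUMINWJLRRQPD {
    jump KUBE-SEP-LYLDBZYLHY4MT3AQ
}
chain KUBE-SEP-LYLDBZYLHY4MT3AQ {
    ip saddr 192.168.0.4 jump KUBE-MARK-MASQ
    meta l4proto tcp dnat to 192.168.0.4:8080
}
chain KUBE-XLB-CG5I4G2RS3ZVWGLK {
    fib saddr type local jump KUBE-MARK-MASQ
    fib saddr type local jump KUBE-SVC-CG5I4G2RS3ZVWGLK
    jump KUBE-SEP-5XVRKWM672JGTWXH
}
chain KUBE-SVC-CG5I4G2RS3ZVWGLK {
    jump KUBE-SEP-5XVRKWM672JGTWXH
}
chain KUBE-SEP-5XVRKWM672JGTWXH {
    ip saddr 192.168.0.5 jump KUBE-MARK-MASQ
    meta l4proto tcp dnat to 192.168.0.5:80
}
chain KUBE-MARK-MASQ {
    meta mark set mark or 0x4000
}
"""

# Directly connected prefixes from the ip route output.
ROUTES = {
    "docker0": "172.17.0.0/16",
    "weave": "192.168.0.0/24",
    "enx00e04c6851de": "192.168.53.0/24",
}


def parse_chains(text):
    """Return {chain name: [rule, ...]} for the simplified ruleset text."""
    chains, current = {}, None
    for line in text.splitlines():
        line = line.strip()
        header = re.match(r"chain (\S+) \{", line)
        if header:
            current = chains.setdefault(header.group(1), [])
        elif line == "}":
            current = None
        elif line and current is not None:
            current.append(line)
    return chains


def dnat_targets(chains, chain, seen=None):
    """Follow jumps from a chain and collect every dnat target reached."""
    seen = set() if seen is None else seen
    if chain in seen:  # each chain is visited once; also guards against loops
        return []
    seen.add(chain)
    targets = []
    for rule in chains.get(chain, []):
        dnat = re.search(r"dnat to (\S+)", rule)
        jump = re.search(r"jump (\S+)", rule)
        if dnat:
            targets.append(dnat.group(1))
        elif jump:
            targets.extend(dnat_targets(chains, jump.group(1), seen))
    return targets


def resolve_service(chains, ip, port):
    """Find the KUBE-SERVICES rule for ip:port and return its dnat targets."""
    for rule in chains["KUBE-SERVICES"]:
        match = re.search(r"ip daddr (\S+) tcp dport (\d+) .*jump (\S+)", rule)
        if match and match.group(1) == ip and int(match.group(2)) == port:
            return dnat_targets(chains, match.group(3))
    return []


def route_for(address):
    """Return the device whose connected prefix contains address."""
    for device, prefix in ROUTES.items():
        if ipaddress.ip_address(address) in ipaddress.ip_network(prefix):
            return device
    return "default"


CHAINS = parse_chains(RULESET)
for ip, port in [("10.107.66.138", 8080), ("192.168.53.147", 80)]:
    for target in resolve_service(CHAINS, ip, port):
        backend_ip = target.rsplit(":", 1)[0]
        print(f"{ip}:{port} -> {target} via {route_for(backend_ip)}")
```

With the sample rules, the service address 10.107.66.138:8080 resolves to 192.168.0.4:8080 and the host address on port 80 resolves to 192.168.0.5:80, both reachable via the weave interface, while 10.107.66.138 itself only matches the default route.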

So from that we’d expect the IP inside the echoserver pod to be 192.168.0.4 and the IP address inside our nginx ingress pod to be 192.168.0.5. Let’s look:

root@udon:/# docker ps | grep echoserver

7cbb177bee18 k8s.gcr.io/echoserver "/usr/local/bin/run.…" 3 days ago Up 3 days k8s_echoserver_hello-node-59bffcc9fd-8hkgb_default_c7111c9e-7131-40e0-876d-be89d5ca1812_0

root@udon:/# docker exec -it 7cbb177bee18 /bin/bash

root@hello-node-59bffcc9fd-8hkgb:/# awk '/32 host/ { print f } {f=$2}' <<< "$(</proc/net/fib_trie)" | sort -u

127.0.0.1

192.168.0.4

It’s a slightly awkward method of determining the local IP addresses due to the stripped down nature of the container, but it clearly shows the expected 192.168.0.4 address.
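For anyone puzzling over the awk one-liner, here is a rough Python equivalent of the same logic: remember the second field of each line, and whenever a line contains "32 host", record the value remembered from the line before it. The sample text is a hand-written illustration in the general shape of /proc/net/fib_trie, not a capture from the container, and local_addresses is a name I made up.

```python
# Hand-written sample in the shape of /proc/net/fib_trie (illustrative only).
SAMPLE_FIB_TRIE = """\
Local:
  +-- 0.0.0.0/0 2 0 2
     +-- 127.0.0.0/8 2 0 2
        +-- 127.0.0.0/31 1 0 0
           |-- 127.0.0.0
              /8 host LOCAL
           |-- 127.0.0.1
              /32 host LOCAL
        |-- 127.255.255.255
           /32 link BROADCAST
     +-- 192.168.0.0/24 2 0 2
        |-- 192.168.0.4
           /32 host LOCAL
        |-- 192.168.0.255
           /32 link BROADCAST
"""


def local_addresses(fib_trie_text):
    """Mirror awk '/32 host/ { print f } {f=$2}' followed by sort -u."""
    found = set()
    previous_field = ""
    for line in fib_trie_text.splitlines():
        fields = line.split()
        if "32 host" in line:
            # The address sits on the preceding "|-- <address>" line.
            found.add(previous_field)
        previous_field = fields[1] if len(fields) > 1 else ""
    return sorted(found)


print(local_addresses(SAMPLE_FIB_TRIE))
```

On a real system the same function could be fed the contents of /proc/net/fib_trie, for example local_addresses(open("/proc/net/fib_trie").read()).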

I’ve touched here upon the ability to actually enter a container and have a poke around its running environment by using docker directly. Next step is to use that to investigate what containers have actually been spun up and what they’re doing. I’ll also revisit networking when I get to the point of building a multi-node cluster, to examine how the bridging between different hosts is done.
