Repurposing my C.H.I.P.

Way back at DebConf16 Gunnar managed to arrange for a number of Next Thing Co. C.H.I.P. boards to be distributed to those who were interested. I was lucky enough to be amongst those who received one, but I have to confess after some initial experimentation it ended up sitting in its box unused.

The reasons for that were varied; partly about not being quite sure what best to do with it, partly due to a number of limitations it had, partly because NTC sadly went insolvent and there was less momentum around the hardware. I’ve always meant to go back to it, poking it every now and then but never completing a project. I’m finally almost there, and I figure I should write some of it up.

TL;DR: My C.H.I.P. is currently running a mainline Linux 6.3 kernel with only a few DTS patches, an upstream u-boot v2022.01 with a couple of minor patches and an unmodified Debian bullseye armhf userspace.

Storage

The main issue with the C.H.I.P. is that it uses MLC NAND, in particular mine has an 8GB H27QCG8T2E5R. That ended up unsupported in Linux, with the UBIFS folk disallowing operation on MLC devices. There’s been subsequent work to enable an “SLC emulation” mode which makes the device more reliable at the cost of losing capacity by pairing up writes/reads in cells (AFAICT). Some of this hit for the H27UCG8T2ETR in 5.16 kernels, but I definitely did some experimentation with 5.17 without having much success. I should maybe go back and try again, but I ended up going a different route.

It turned out that BytePorter had documented how to add a microSD slot to the NTC C.H.I.P., using just a microSD to full SD card adapter. Every microSD card I buy seems to come with one of these, so I had plenty lying around to test with. I started with ensuring the kernel could see it ok (by modifying the device tree), but once that was all confirmed I went further and built a more modern u-boot that talked to the SD card, and defaulted to booting off it. That meant no more relying on the internal NAND at all!

I do see some flakiness with the SD card, which is possibly down to the dodgy way it’s hooked up (I should probably do a basic PCB layout with JLCPCB instead). That’s mostly been mitigated by forcing it into 1-bit mode instead of 4-bit mode (I tried lowering the frequency too, but that didn’t make a difference).

The problem manifests as:

```
sunxi-mmc 1c11000.mmc: data error, sending stop command
```

and then all storage access freezing (existing logins still work, if the program you’re trying to run is in cache). I can’t find a conclusive software solution to this; I’m pretty sure it’s the hardware, but I don’t understand why the recovery doesn’t generally work.

Random power offs

After I had storage working I’d see random hangs or power offs. It wasn’t quite clear what was going on. So I started trying to work out how to find out the CPU temperature, in case it was overheating. It turns out the temperature sensor on the R8 is part of the touchscreen driver, and I’d taken my usual approach of turning off all the drivers I didn’t think I’d need. Enabling it (CONFIG_TOUCHSCREEN_SUN4I) gave temperature readings and seemed to help somewhat with stability, though not completely.

Next I ended up looking at the AXP209 PMIC. There were various scripts still installed (I’d started out with the NTC Debian install and slowly upgraded it to bullseye while stripping away the obvious pieces I didn’t need) and a start-up script called enable-no-limit. This turned out to not be running (some sort of expectation of i2c-dev being loaded and another failing check), but looking at the script and the data sheet revealed the issue.

The AXP209 can cope with 3 power sources; an external DC source, a Li-battery, and finally a USB port. I was powering my board via the USB port, using a charger rated for 2A. It turns out that the AXP209 defaults to limiting USB current to 900mA, and that with wifi active and the CPU busy the C.H.I.P. can rise above that. At which point the AXP shuts everything down. Armed with that info I was able to understand what the power scripts were doing and which bit I needed - i2cset -f -y 0 0x34 0x30 0x03 to set no limit and disable the auto-power off. Additionally I also discovered that the AXP209 had a built in temperature sensor as well, so I added support for that via iio-hwmon.
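As a rough sketch of what that register write changes: the low two bits of register 0x30 select the VBUS current limit. The mapping below is my reading of the AXP209 data sheet and the mainline axp20x driver, so treat it as an assumption, and the helper name `axp209_vbus_limit` is purely illustrative.

```shell
# Decode the VBUS current-limit field (bits 1:0) of AXP209 register 0x30.
# Assumed mapping: 0 = 900mA, 1 = 500mA, 2 = 100mA, 3 = no limit.
axp209_vbus_limit() {
    local value=$(( $1 ))          # accepts 0x.. or decimal
    case $(( value & 0x03 )) in
        0) echo "900mA" ;;
        1) echo "500mA" ;;
        2) echo "100mA" ;;
        3) echo "none" ;;
    esac
}

# On the board itself (needs i2c-tools and root; bus 0, address 0x34 as above):
#   axp209_vbus_limit "$(i2cget -f -y 0 0x34 0x30)"   # show the current setting
#   i2cset -f -y 0 0x34 0x30 0x03                     # lift the limit
```

The write is not persistent, so it has to be repeated on every boot, for example from a start-up script.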

WiFi

WiFi on the C.H.I.P. is provided by an RTL8723BS SDIO attached device. It’s terrible (and not just here, I had an x86 based device with one where it also sucked). Thankfully there’s a driver in staging in the kernel these days, but I’ve still found it can fall out with my house setup, end up connecting to a further away AP which then results in lots of retries, dropped frames and CPU consumption. Nailing it to the AP on the other side of the wall from where it is helps. I haven’t done any serious testing with the Bluetooth other than checking it’s detected and can scan ok.

Patches

I patched u-boot v2022.01 (which shows you how long ago I was trying this out) with the following to enable boot from external SD:

u-boot C.H.I.P. external SD patch

```diff
diff --git a/arch/arm/dts/sun5i-r8-chip.dts b/arch/arm/dts/sun5i-r8-chip.dts
index 879a4b0f3b..1cb3a754d6 100644
--- a/arch/arm/dts/sun5i-r8-chip.dts
+++ b/arch/arm/dts/sun5i-r8-chip.dts
@@ -84,6 +84,13 @@
 		reset-gpios = <&pio 2 19 GPIO_ACTIVE_LOW>; /* PC19 */
 	};
 
+	mmc2_pins_e: mmc2@0 {
+		pins = "PE4", "PE5", "PE6", "PE7", "PE8", "PE9";
+		function = "mmc2";
+		drive-strength = <30>;
+		bias-pull-up;
+	};
+
 	onewire {
 		compatible = "w1-gpio";
 		gpios = <&pio 3 2 GPIO_ACTIVE_HIGH>; /* PD2 */
@@ -175,6 +182,16 @@
 	status = "okay";
 };
 
+&mmc2 {
+	pinctrl-names = "default";
+	pinctrl-0 = <&mmc2_pins_e>;
+	vmmc-supply = <&reg_vcc3v3>;
+	vqmmc-supply = <&reg_vcc3v3>;
+	bus-width = <4>;
+	broken-cd;
+	status = "okay";
+};
+
 &ohci0 {
 	status = "okay";
 };
diff --git a/arch/arm/include/asm/arch-sunxi/gpio.h b/arch/arm/include/asm/arch-sunxi/gpio.h
index f3ab1aea0e..c0dfd85a6c 100644
--- a/arch/arm/include/asm/arch-sunxi/gpio.h
+++ b/arch/arm/include/asm/arch-sunxi/gpio.h
@@ -167,6 +167,7 @@ enum sunxi_gpio_number {
 #define SUN8I_GPE_TWI2 3
 #define SUN50I_GPE_TWI2 3
+#define SUNXI_GPE_SDC2 4
 #define SUNXI_GPF_SDC0 2
 #define SUNXI_GPF_UART0 4
diff --git a/board/sunxi/board.c b/board/sunxi/board.c
index fdbcd40269..f538cb7e20 100644
--- a/board/sunxi/board.c
+++ b/board/sunxi/board.c
@@ -433,9 +433,9 @@ static void mmc_pinmux_setup(int sdc)
 		sunxi_gpio_set_drv(pin, 2);
 	}
 #elif defined(CONFIG_MACH_SUN5I)
-	/* SDC2: PC6-PC15 */
-	for (pin = SUNXI_GPC(6); pin <= SUNXI_GPC(15); pin++) {
-		sunxi_gpio_set_cfgpin(pin, SUNXI_GPC_SDC2);
+	/* SDC2: PE4-PE9 */
+	for (pin = SUNXI_GPE(4); pin <= SUNXI_GPE(9); pin++) {
+		sunxi_gpio_set_cfgpin(pin, SUNXI_GPE_SDC2);
 		sunxi_gpio_set_pull(pin, SUNXI_GPIO_PULL_UP);
 		sunxi_gpio_set_drv(pin, 2);
 	}
```

I’ve sent some patches for the kernel device tree upstream - there’s an outstanding issue with the Bluetooth wake GPIO causing the serial port not to probe(!) that I need to resolve before sending a v2, but what’s there works for me.

The only remaining piece is a patch to enable the external SD for Linux; I don’t think it’s appropriate to send upstream but it’s fairly basic. This limits the bus to 1 bit rather than the 4 bits it’s capable of, as mentioned above.

Linux C.H.I.P. external SD DTS patch

```diff
diff --git a/arch/arm/boot/dts/sun5i-r8-chip.dts b/arch/arm/boot/dts/sun5i-r8-chip.dts
index fd37bd1f3920..2b5aa4952620 100644
--- a/arch/arm/boot/dts/sun5i-r8-chip.dts
+++ b/arch/arm/boot/dts/sun5i-r8-chip.dts
@@ -163,6 +163,17 @@ &mmc0 {
 	status = "okay";
 };
 
+&mmc2 {
+	pinctrl-names = "default";
+	pinctrl-0 = <&mmc2_4bit_pe_pins>;
+	vmmc-supply = <&reg_vcc3v3>;
+	vqmmc-supply = <&reg_vcc3v3>;
+	bus-width = <1>;
+	non-removable;
+	disable-wp;
+	status = "okay";
+};
+
 &ohci0 {
 	status = "okay";
 };
```

As for what I’m doing with it, I think that’ll have to be a separate post.

Buttering up my storage

(TL;DR: I’ve been trying out btrfs in some places instead of ext4, I’ve hit absolutely zero issues and there are a few features that make me plan to use it more.)

Despite (or perhaps because of) working on storage products for a reasonable chunk of my career I have tended towards a conservative approach to my filesystems. By the time I came to Linux ext2 was well established, the move to ext3 was a logical one (the joys of added journalling for faster recovery after unclean shutdowns) and for a long time my default stack has been MD raid with LVM2 on top and then ext4 as the filesystem.

I’ve dabbled with other filesystems; I ran XFS for a while on my VDR machine, and also when I had a large tradspool with INN, but never really had a hard requirement for it. I’ve ended up adminning a machine that had JFS in the past, largely for historical reasons, but don’t really remember any issues (vague recollections of NFS problems but that might just have been NFS being NFS).

However. ZFS has gathered itself a significant fan base and that makes me wonder about what it can offer and whether I want that. Firstly, let’s be clear that I’m never going to run a primary filesystem that isn’t part of the mainline kernel. So ZFS itself is out, because I run Linux. So what do I want that I can’t get with ext4? Firstly, I’d like data checksumming. As storage gets larger there’s a bigger chance of silent data corruption and while I have backups of the important stuff that doesn’t help if you don’t know you need to use them. Secondly, these days I have machines running containers, VMs, or with lots of source checkouts with a reasonable amount of overlap in their data. Disk space has got cheaper, but I’d still like to be able to do some sort of deduplication of common blocks.

So, I’ve been trying out btrfs. When I installed my desktop I went with btrfs for / and /home (I kept /boot as ext4). The thought process was that this was a local machine (so easy access if it all went wrong) and I take regular backups (so if it all went wrong I could recover). That was a year and a half ago and it’s been pretty dull; I mostly forget I’m running btrfs instead of ext4. This is on a machine that tracks Debian testing, so currently on kernel 6.1 but originally installed with 5.10. So it seems modern btrfs is reasonably stable for a machine that isn’t driven especially hard. Good start.

The fact I forget what filesystem I’m running points to the fact that I’m not actually doing anything special here. I get the advantage of data checksumming, but not much else. 2 things spring to mind. Firstly, I don’t do snapshots. Given I run testing it might be wiser if I did take a snapshot before every apt-get upgrade, and I have a friend who does just that, but even when I’ve run unstable I’ve never had a machine get itself into a state that I couldn’t recover so I haven’t spent time investigating. I note Ubuntu has apt-btrfs-snapshot but it doesn’t seem to have any updates for years.
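For what it’s worth, a snapshot-before-upgrade step could look something like the sketch below. It is not what my friend runs; the `/.snapshots` directory and both function names are hypothetical, and it only covers the subvolume mounted at /.

```shell
# Build a snapshot name such as "pre-upgrade-20230301T120000" from a prefix
# and an optional timestamp (defaults to the current UTC time).
snapshot_name() {
    local prefix="$1"
    local stamp="${2:-$(date -u +%Y%m%dT%H%M%S)}"
    echo "${prefix}-${stamp}"
}

# Take a read-only snapshot of / and only then upgrade (run as root).
# /.snapshots is a hypothetical directory that must already exist on the
# same btrfs filesystem as /.
snapshot_then_upgrade() {
    local dest
    dest="/.snapshots/$(snapshot_name pre-upgrade)"
    btrfs subvolume snapshot -r / "${dest}" && apt-get upgrade
}
```

Old snapshots would need pruning with `btrfs subvolume delete` from time to time, otherwise they pin the space used by replaced packages.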

The other thing I didn’t do when I installed my desktop is take advantage of subvolumes. I’m still trying to get my head around exactly what I want them for, but they provide a partial replacement for LVM when it comes to carving up disk space. Instead of the separate / and /home LVs I created I could have created a single LV that would have a single btrfs filesystem on it. / and /home would then be separate subvolumes, allowing me to snapshot each individually. Quotas can also be applied separately so there’s still the potential to prevent one subvolume taking all available space.

Encouraged by the lack of hassle with my desktop I decided to try moving my sbuild machine over to use btrfs for its build chroots. For Reasons this is a VM kindly hosted by a friend, rather than something local. To be honest these days I would probably go for local hosting, but it works and there’s no strong reason to move. The point is it’s remote, and so if migrating went wrong and I had to ask for assistance I’d be bothering someone who’s doing me a favour as it is.

The build VM is, of course, running LVM, and there was luckily some free space available. I’m reasonably sure the underlying storage involves spinning rust, so I did a laborious set of pvmove commands to make sure all the available space was at the start of the PV, and created a new btrfs volume there. I was advised that while btrfs-convert would do the job it was better to create a fresh filesystem where possible. This time I did create an initial root subvolume.

Configuring up sbuild was then much simpler than I’d expected. My setup originally started out as a set of tarballs for the chroots that would get untarred + used for the builds, which is pretty slow. Once overlayfs was mature enough I switched to that. I’d had a conversation with Enrico about his nspawn/btrfs setup, but it turned out Russ Allbery had written an excellent set of instructions on sbuild with btrfs. I tweaked my existing setup based on his details, and I was in business. Each chroot is a separate subvolume - I don’t actually end up having to mount them individually, but it means that only the chroot in use gets snapshotted. For example during a build the following can be observed:

```
# btrfs subvolume list /
ID 257 gen 111534 top level 5 path root
ID 271 gen 111525 top level 257 path srv/chroot/unstable-amd64-sbuild
ID 275 gen 27873 top level 257 path srv/chroot/bullseye-amd64-sbuild
ID 276 gen 27873 top level 257 path srv/chroot/buster-amd64-sbuild
ID 343 gen 111533 top level 257 path srv/chroot/snapshots/unstable-amd64-sbuild-328059a0-e74b-4d9f-be70-24b59ccba121
```

I was a little confused about whether I’d got something wrong because the snapshot top level is listed as 257 rather than 271, but digging further with btrfs subvolume show on the 2 mounted directories correctly showed the snapshot had a parent equal to the chroot, not /.
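That check can be scripted, roughly as below. I’m assuming here that the relationship shows up as the snapshot’s “Parent UUID” matching the chroot’s “UUID” in the `btrfs subvolume show` output, and that the output uses “Field: value” lines; both helper names are made up for illustration.

```shell
# Print one field (e.g. "UUID" or "Parent UUID") from `btrfs subvolume show`
# output supplied on stdin. Assumes "Field: <whitespace> value" lines.
subvol_field() {
    awk -F ':[ \t]*' -v key="$1" '
        { name = $1; gsub(/^[ \t]+/, "", name) }
        name == key { print $2; exit }
    '
}

# Succeeds if the snapshot at $2 was taken from the subvolume at $1 (needs root).
is_snapshot_of() {
    local origin snap_parent
    origin=$(btrfs subvolume show "$1" | subvol_field "UUID")
    snap_parent=$(btrfs subvolume show "$2" | subvol_field "Parent UUID")
    [ -n "${origin}" ] && [ "${origin}" = "${snap_parent}" ]
}
```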

As a final step I ran jdupes via jdupes -1Br / to deduplicate things across the filesystem. It didn’t end up providing a significant saving unfortunately - I guess there’s a reasonable amount of change between Debian releases - but I then tried it on my desktop, which tends to have a large number of similar source trees checked out. There I managed to save about 5% on /home, which didn’t seem too shabby.

The sbuild setup has been in place for a couple of months now, and I’ve run quite a few builds on it while preparing for the freeze. So I’m fairly confident in the stability of the setup and my next move is to transition my local house server over to btrfs for its containers (which all run under systemd-nspawn). Those are generally running a Debian stable base so there should be a decent amount of commonality for deduping.

I’m not saying I’m yet at the point where I’ll default to btrfs on new installs, but I’m definitely looking at it for situations where I think I can get benefits from deduplication, or being able to divide up disk space without hard partitioning space.

(And, just to answer the worry I had when I started, I’ve got nowhere near ENOSPC problems, but I believe they’re handled much more gracefully these days. And my experience of ZFS when it got above 90% utilization was far from ideal too.)

Fixing mobile viewing

It was brought to my attention recently that the mobile viewing experience of this blog was not exactly what I’d hope for. In my poor defence I proof read on my desktop and the only time I see my posts on mobile is via FreshRSS. Also my UX ability sucks.

Anyway. I’ve updated the “theme” to a more recent version of minima and tried to make sure I haven’t broken it all in the process (I did break tagging, but then I fixed it again). I double checked the generated feed to confirm it was the same (other than some re-tagging I did), so hopefully I haven’t flooded anyone’s feed.

Hopefully I can go back to ignoring the underlying blog engine for another 5+ years. If not I’ll have to take a closer look at Enrico’s staticsite.

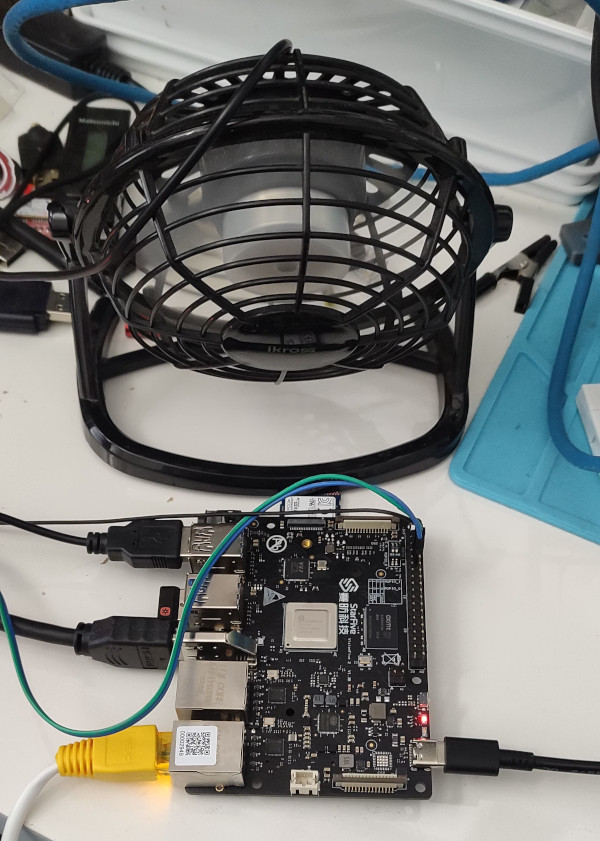

First impressions of the VisionFive 2

Back in September last year I chose to back the StarFive VisionFive 2 on Kickstarter. I don’t have a particular use in mind for it, but I felt it was one of the first RISC-V systems that were relatively capable (mentally I have it as somewhere between a Raspberry Pi 3 + a Pi 4). In particular it’s a quad 1.5GHz 64-bit RISC-V core with 8G RAM, USB3, GigE ethernet and a single M.2 PCIe slot. More than ample as a personal machine for playing around with RISC-V and doing local builds. I ended up paying £67 for the Early Bird variant (dual GigE ethernet rather than 1 x 100Mb and 1 x GigE). A couple of weeks ago I got an email with a tracking number and last week it finally turned up.

Being impatient the first thing I did was plug it into a monitor, connect up a keyboard, and power it on. Nothing except some flashing lights. Looking at the boot selector DIP switches suggested it was configured to boot from UART, so I flipped them to (what I thought was) the flash setting. It wasn’t - turns out the “ON” marking on the switches represents logic 0 and it was correctly set up when I got it. I went to read the documentation which talked about writing an image to a MicroSD card, but also had details of the UART connection. Wanting to make sure the device was at least doing something before I actually tried an OS on it I hooked up a USB/serial dongle and powered the board up again. Success! U-Boot appeared and I could interact with it.

I went to the VisionFive2 Debian page and proceeded to torrent the Image-69 image, writing it to a MicroSD card and inserting it in the slot on the bottom of the board. It booted fine. I can’t even tell you what graphical environment it booted up because I don’t remember; it worked fine though (at 1080p, I’ve seen reports that 4K screens will make it croak).

Poking around the image revealed that it’s built off a snapshot.debian.org snapshot from 20220616T194833Z, which is a little dated at this point but I understand the rationale behind picking something that works and sticking with it. The kernel is of course a vendor special, based on 5.15.0. Further investigation revealed that the entire X/graphics stack is living in /usr/local, which isn’t overly surprising; it’s Imagination based. I was pleasantly surprised to discover there is work to upstream the Imagination support, but I’m not planning to run the board with a monitor attached so it’s not a high priority for me.

Having discovered all that I decided to see how well a “clean” Debian unstable install from Debian Ports would go. I had a spare Intel Optane lying around (it’s a stupid 22110 M.2 which is too long for any machine I own), so I put it in the slot on the bottom of the board. To my surprise it Just Worked and was detected ok:

```
# lspci
0000:00:00.0 PCI bridge: PLDA XpressRich-AXI Ref Design (rev 02)
0000:01:00.0 USB controller: VIA Technologies, Inc. VL805/806 xHCI USB 3.0 Controller (rev 01)
0001:00:00.0 PCI bridge: PLDA XpressRich-AXI Ref Design (rev 02)
0001:01:00.0 Non-Volatile memory controller: Intel Corporation NVMe Datacenter SSD [Optane]
```

I created a single partition with an ext4 filesystem (initially tried btrfs, but the StarFive kernel doesn’t support it), and kicked off a debootstrap with:

```
# mkfs -t ext4 /dev/nvme0n1p1
# mount /dev/nvme0n1p1 /mnt
# debootstrap --keyring=/etc/apt/trusted.gpg.d/debian-ports-archive-2023.gpg \
    unstable /mnt https://deb.debian.org/debian-ports
```

The u-boot setup has a convoluted set of vendor scripts that eventually ends up reading a /boot/extlinux/extlinux.conf config from /dev/mmcblk1p2, so I added an additional entry there using the StarFive kernel but pointing to the NVMe device for /. Made sure to set a root password (not that I’ve been bitten by that before, too many times), and rebooted. Success! Well. Sort of. I hit a bunch of problems with having a getty running on ttyS0 as well as one running on hvc0. The second turns out to be a console device from the RISC-V SBI. I did a systemctl mask serial-getty@hvc0.service which made things a bit happier, but I was still seeing odd behaviour and output. Turned out I needed to rebuild the initramfs as well; the StarFive one was using Plymouth and doing some other stuff that seemed to be confusing matters. An update-initramfs -k 5.15.0-starfive -c built me a new one and everything was happy.
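For illustration, an extra extlinux.conf entry of that sort has roughly the shape produced by the sketch below. The `label`, `kernel`, `initrd`, `fdt` and `append` keywords are standard extlinux syntax as understood by u-boot; everything else (the function name, the argument order and every path you would pass in) is a placeholder rather than what the StarFive image actually ships.

```shell
# Print an extlinux.conf entry. Every argument is supplied by the caller:
#   $1 label, $2 kernel image, $3 initrd, $4 device tree blob,
#   $5 root device, $6 any extra kernel command line options.
extlinux_entry() {
    local label="$1" kernel="$2" initrd="$3" fdt="$4" root="$5" extra="${6:-}"
    cat <<EOF
label ${label}
    kernel ${kernel}
    initrd ${initrd}
    fdt ${fdt}
    append root=${root} ${extra}
EOF
}

# Example with placeholder file names (copy the real ones from the vendor entry):
#   extlinux_entry debian-nvme /KERNEL /INITRD /DTB /dev/nvme0n1p1 rootwait \
#       >> /boot/extlinux/extlinux.conf
```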

Next problem; the StarFive kernel doesn’t have IPv6 support. StarFive are good citizens and make their 5.15 kernel tree available, so I grabbed it, fed it the existing config, and tweaked some options (including adding IPV6 and SECCOMP, which chrony wanted). Slight hiccup when it turned out trying to do things like make sound modular caused it to fail to compile, and having to backport the fix that allowed the use of GCC 12 (as present in sid), but it got there. So I got cocky and tried to update it to the latest 5.15.94. A few manual merge fixups (which I may or may not have got right, but it compiles and boots for me), and success. Timings:

```
$ time make -j 4 bindeb-pkg
… [linux-image-5.15.94-00787-g1fbe8ac32aa8]
real 37m0.134s
user 117m27.392s
sys 6m49.804s
```

On the subject of kernels I am pleased to note that there are efforts to upstream the VisionFive 2 support, with what appears to be multiple members of StarFive engaging in multiple patch submission rounds. It’s really great to see this and I look forward to being able to run an unmodified mainline kernel on my board.

Niggles? I have a few. The provided u-boot doesn’t have NVMe support enabled, so at present I need to keep a MicroSD card to boot off, even though root is on an SSD. I’m also seeing some errors in dmesg from the SSD:

```
[155933.434038] nvme nvme0: I/O 436 QID 4 timeout, completion polled
[156173.351166] nvme nvme0: I/O 48 QID 3 timeout, completion polled
[156346.228993] nvme nvme0: I/O 108 QID 3 timeout, completion polled
```

It doesn’t seem to cause any actual issues, and it could be the SSD, the 5.15 kernel or an actual hardware thing - I’ll keep an eye on it (I will probably end up with a different SSD that actually fits, so that’ll provide another data point).

More annoying is the temperature the CPU seems to run at. There’s no heatsink or fan, just the metal heatspreader on top of the CPU, and in normal idle operation it sits at around 60°C. Compiling a kernel it hit 90°C before I stopped the job and sorted out some additional cooling in the form of a desk fan, which kept it at just over 30°C.
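A minimal sketch for keeping an eye on the temperature from a shell, assuming the kernel exposes the sensor through the generic thermal sysfs interface (values in millidegrees Celsius). That the CPU sensor is `thermal_zone0` on this board is an assumption, and the function names are illustrative.

```shell
# Convert a sysfs millidegree reading (e.g. 60123) to degrees Celsius,
# truncated to one decimal place.
millideg_to_celsius() {
    local m="$1"
    printf '%d.%d\n' $(( m / 1000 )) $(( (m % 1000) / 100 ))
}

# Print the temperature of one thermal zone directory.
# Defaulting to thermal_zone0 for the CPU is an assumption.
cpu_temp() {
    local zone="${1:-/sys/class/thermal/thermal_zone0}"
    millideg_to_celsius "$(cat "${zone}/temp")"
}
```

Running something like `watch -n 5` around `cpu_temp` during a kernel build is enough to see whether the cooling is coping.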

I haven’t seen any actual stability problems, but I wouldn’t want to run for any length of time like that. I’ve ordered a heatsink and also realised that the board supports a Raspberry Pi style PoE “Hat”, so I’ve got one of those that includes a fan ordered (I am a complete convert to PoE especially for small systems like this).

With the desk fan setup I’ve been able to run the board for extended periods under load (I did a full recompile of the Debian 6.1.12-1 kernel package and it took about 10 hours). The M.2 slot is unfortunately only a single PCIe v2 lane, and my testing topped out at about 180MB/s. IIRC that is about half what the slot should be capable of, and less than a 10th of what the SSD can do. Ethernet testing with iPerf3 sustained about 941Mb/s, so basically maxing out the port. The board as a whole isn’t going to set any speed records, but it’s perfectly usable, and pretty impressive for the price point.
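To put a number on the slot’s ceiling: PCIe 2.0 signals at 5 GT/s per lane with 8b/10b encoding, which works out to a raw 500MB/s before any packet overhead. The sketch below just does that arithmetic (function names are illustrative); the usable figure is lower than the raw one, so 180MB/s is a bit under half of what is realistically achievable.

```shell
# Raw per-lane data rate in MB/s: transfer rate in MT/s, scaled by the
# line encoding (payload bits / total bits), divided by 8 bits per byte.
pcie_lane_mb_per_s() {
    local mega_transfers="$1" payload_bits="$2" total_bits="$3"
    echo $(( mega_transfers * payload_bits / total_bits / 8 ))
}

# Percentage of a ceiling achieved by a measured rate (integer, rounds down).
percent_of() {
    echo $(( $1 * 100 / $2 ))
}

# pcie_lane_mb_per_s 5000 8 10   -> raw ceiling for one PCIe 2.0 lane
# percent_of 180 500             -> measured throughput as a share of that
```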

On the Debian side I’ve not hit any surprises. There’s work going on to move RISC-V to a proper release architecture, and I’m hoping to be able to help out with that, but the version of unstable I installed from the ports infrastructure has looked just like any other Debian install. Which is what you want. And that pretty much sums up my overall experience of the VisionFive 2; it’s not noticeably different than any other single board computer. That’s a good thing, FWIW, and once the kernel support lands properly upstream (it’ll be post 6.3 at least it seems) it’ll be a boring mainline supported platform that just happens to be RISC-V.

Building a read-only Debian root setup: Part 2

This is the second part of how I build a read-only root setup for my router. You might want to read part 1 first, which covers the initial boot and general overview of how I tie the pieces together. This post will describe how I build the squashfs image that forms the main filesystem.

Most of the build is driven from a script, make-router, which I’ll dissect below. It’s highly tailored to my needs, and this is a fairly lengthy post, but hopefully the steps I describe prove useful to anyone trying to do something similar.

Breakdown of make-router

```bash
#!/bin/bash

# Either rb3011 (arm) or rb5009 (arm64)
#HOSTNAME="rb3011"
HOSTNAME="rb5009"

if [ "x${HOSTNAME}" == "xrb3011" ]; then
    ARCH=armhf
elif [ "x${HOSTNAME}" == "xrb5009" ]; then
    ARCH=arm64
else
    echo "Unknown host: ${HOSTNAME}"
    exit 1
fi
```

It’s a bash script, and I allow building for either my RB3011 or RB5009, which means a different architecture (32 vs 64 bit). I run this script on my Pi 4 which means I don’t have to mess about with QemuUserEmulation.

```bash
BASE_DIR=$(dirname $0)
IMAGE_FILE=$(mktemp --tmpdir router.${ARCH}.XXXXXXXXXX.img)
MOUNT_POINT=$(mktemp -p /mnt -d router.${ARCH}.XXXXXXXXXX)

# Build and mount an ext4 image file to put the root file system in
dd if=/dev/zero bs=1 count=0 seek=1G of=${IMAGE_FILE}
mkfs -t ext4 ${IMAGE_FILE}
mount -o loop ${IMAGE_FILE} ${MOUNT_POINT}
```

I build the image in a loopback ext4 file on tmpfs (my Pi4 is the 8G model), which makes things a bit faster.
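The dd invocation above writes no data; it only seeks to 1G so the file is created sparse. A minimal equivalent sketch, assuming GNU coreutils, uses truncate instead (the function name is illustrative):

```shell
# Create (or extend) a sparse file of the given size without writing any
# data blocks, e.g. make_sparse_image /tmp/router.img 1G
make_sparse_image() {
    local path="$1" size="$2"
    truncate -s "${size}" "${path}"
}
```

Either way the image only consumes tmpfs memory for the blocks that mkfs and debootstrap actually write.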

```bash
# Add dpkg excludes
mkdir -p ${MOUNT_POINT}/etc/dpkg/dpkg.cfg.d/
cat <<EOF > ${MOUNT_POINT}/etc/dpkg/dpkg.cfg.d/path-excludes
# Exclude docs
path-exclude=/usr/share/doc/*

# Only locale we want is English
path-exclude=/usr/share/locale/*
path-include=/usr/share/locale/en*/*
path-include=/usr/share/locale/locale.alias

# No man pages
path-exclude=/usr/share/man/*
EOF
```

Create a dpkg excludes config to drop docs, man pages and most locales before we even start the bootstrap.

# Setup fstab + mtab

echo "# Empty fstab as root is pre-mounted" > ${MOUNT_POINT}/etc/fstab

ln -s ../proc/self/mounts ${MOUNT_POINT}/etc/mtab

# Setup hostname

echo ${HOSTNAME} > ${MOUNT_POINT}/etc/hostname

# Add the root SSH keys

mkdir -p ${MOUNT_POINT}/root/.ssh/

cat <<EOF > ${MOUNT_POINT}/root/.ssh/authorized_keys

ssh-rsa AAAAB3NzaC1yc2EAAAABIwAAAQEAv8NkUeVdsVdegS+JT9qwFwiHEgcC9sBwnv6RjpH6I4d3im4LOaPOatzneMTZlH8Gird+H4nzluciBr63hxmcFjZVW7dl6mxlNX2t/wKvV0loxtEmHMoI7VMCnrWD0PyvwJ8qqNu9cANoYriZRhRCsBi27qPNvI741zEpXN8QQs7D3sfe4GSft9yQplfJkSldN+2qJHvd0AHKxRdD+XTxv1Ot26+ZoF3MJ9MqtK+FS+fD9/ESLxMlOpHD7ltvCRol3u7YoaUo2HJ+u31l0uwPZTqkPNS9fkmeCYEE0oXlwvUTLIbMnLbc7NKiLgniG8XaT0RYHtOnoc2l2UnTvH5qsQ== noodles@earth.li

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAACAQDQb9+qFemcwKhey3+eTh5lxp+3sgZXW2HQQEZMt9hPvVXk+MiiNMx9WUzxPJnwXqlmmVdKsq+AvjA0i505Pp8fIj5DdUBpSqpLghmzpnGuob7SSwXYj+352hjD52UC4S0KMKbIaUpklADgsCbtzhYYc4WoO8F7kK63tS5qa1XSZwwRwPbYOWBcNocfr9oXCVWD9ismO8Y0l75G6EyW8UmwYAohDaV83pvJxQerYyYXBGZGY8FNjqVoOGMRBTUcLj/QTo0CDQvMtsEoWeCd0xKLZ3gjiH3UrknkaPra557/TWymQ8Oh15aPFTr5FvKgAlmZaaM0tP71SOGmx7GpCsP4jZD1Xj/7QMTAkLXb+Ou6yUOVM9J4qebdnmF2RGbf1bwo7xSIX6gAYaYgdnppuxqZX1wyAy+A2Hie4tUjMHKJ6OoFwBsV1sl+3FobrPn6IuulRCzsq2aLqLey+PHxuNAYdSKo7nIDB3qCCPwHlDK52WooSuuMidX4ujTUw7LDTia9FxAawudblxbrvfTbg3DsiDBAOAIdBV37HOAKu3VmvYSPyqT80DEy8KFmUpCEau59DID9VERkG6PWPVMiQnqgW2Agn1miOBZeIQV8PFjenAySxjzrNfb4VY/i/kK9nIhXn92CAu4nl6D+VUlw+IpQ8PZlWlvVxAtLonpjxr9OTw== noodles@yubikey

ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQC0I8UHj4IpfqUcGE4cTvLB0d2xmATSUzqtxW6ZhGbZxvQDKJesVW6HunrJ4NFTQuQJYgOXY/o82qBpkEKqaJMEFHTCjcaj3M6DIaxpiRfQfs0nhtzDB6zPiZn9Suxb0s5Qr4sTWd6iI9da72z3hp9QHNAu4vpa4MSNE+al3UfUisUf4l8TaBYKwQcduCE0z2n2FTi3QzmlkOgH4MgyqBBEaqx1tq7Zcln0P0TYZXFtrxVyoqBBIoIEqYxmFIQP887W50wQka95dBGqjtV+d8IbrQ4pB55qTxMd91L+F8n8A6nhQe7DckjS0Xdla52b9RXNXoobhtvx9K2prisagsHT noodles@cup

ecdsa-sha2-nistp256 AAAAE2VjZHNhLXNoYTItbmlzdHAyNTYAAAAIbmlzdHAyNTYAAABBBK6iGog3WbNhrmrkglNjVO8/B6m7mN6q1tMm1sXjLxQa+F86ETTLiXNeFQVKCHYrk8f7hK0d2uxwgj6Ixy9k0Cw= noodles@sevai

EOF

Setup fstab, the hostname and SSH keys for root.

```bash
# Bootstrap our install
debootstrap \
    --arch=${ARCH} \
    --include=collectd-core,conntrack,dnsmasq,ethtool,iperf3,kexec-tools,mosquitto,mtd-utils,mtr-tiny,ppp,tcpdump,rng-tools5,ssh,watchdog,wget \
    --exclude=dmidecode,isc-dhcp-client,isc-dhcp-common,makedev,nano \
    bullseye ${MOUNT_POINT} https://deb.debian.org/debian/
```

Actually do the debootstrap step, including a bunch of extra packages that we want.

```bash
# Install mqtt-arp
cp ${BASE_DIR}/debs/mqtt-arp_1_${ARCH}.deb ${MOUNT_POINT}/tmp
chroot ${MOUNT_POINT} dpkg -i /tmp/mqtt-arp_1_${ARCH}.deb
rm ${MOUNT_POINT}/tmp/mqtt-arp_1_${ARCH}.deb

# Frob the mqtt-arp config so it starts after mosquitto
sed -i -e 's/After=.*/After=mosquitto.service/' ${MOUNT_POINT}/lib/systemd/system/mqtt-arp.service
```

I haven’t uploaded mqtt-arp to Debian, so I install a locally built package, and ensure it starts after mosquitto (the MQTT broker), given they’re running on the same host.

```bash
# Frob watchdog so it starts earlier than multi-user
sed -i -e 's/After=.*/After=basic.target/' ${MOUNT_POINT}/lib/systemd/system/watchdog.service

# Make sure the watchdog is poking the device file
sed -i -e 's/^#watchdog-device/watchdog-device/' ${MOUNT_POINT}/etc/watchdog.conf
```

Watchdog timeouts were a particular issue on the RB3011, where the default timeout didn’t give enough time to reach multiuser mode before it would reset the router. Not helpful, so alter the config to start it earlier (and make sure it’s configured to actually kick the device file).

```bash
# Clean up docs + locales
rm -r ${MOUNT_POINT}/usr/share/doc/*
rm -r ${MOUNT_POINT}/usr/share/man/*
for dir in ${MOUNT_POINT}/usr/share/locale/*/; do
    if [ "${dir}" != "${MOUNT_POINT}/usr/share/locale/en/" ]; then
        rm -r ${dir}
    fi
done
```

Clean up any docs etc that ended up installed.

```bash
# Set root password to root
echo "root:root" | chroot ${MOUNT_POINT} chpasswd
```

The only remote login method is an SSH key for the root account, though I suppose a known password allows someone to execute a privilege escalation from a daemon user, so I should probably randomise this. It does need to be known though, so that it’s possible to log in via the serial console for debugging.
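Randomising it could be as simple as the sketch below. This is not part of make-router; the function name is made up, and where the generated password gets recorded (it has to be stored somewhere to remain usable on the serial console) is left as a hypothetical path in the comments.

```shell
# Generate a random password: 18 bytes from the kernel's random source,
# base64-encoded, which gives 24 characters with no padding.
random_password() {
    head -c 18 /dev/urandom | base64
}

# Possible use in the build script (the output path is hypothetical):
#   ROOT_PW=$(random_password)
#   echo "root:${ROOT_PW}" | chroot ${MOUNT_POINT} chpasswd
#   echo "${ROOT_PW}" > ${BASE_DIR}/root-password.${HOSTNAME}
```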

```bash
# Add security to sources.list + update
echo "deb https://security.debian.org/debian-security bullseye-security main" >> ${MOUNT_POINT}/etc/apt/sources.list
chroot ${MOUNT_POINT} apt update
chroot ${MOUNT_POINT} apt -y full-upgrade
chroot ${MOUNT_POINT} apt clean

# Cleanup the APT lists
rm ${MOUNT_POINT}/var/lib/apt/lists/www.*
rm ${MOUNT_POINT}/var/lib/apt/lists/security.*
```

Pull in any security updates, then clean out the APT lists rather than polluting the image with them.
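The two rm globs above only match list files whose names start with www. or security., so they depend on which mirror hostnames are in sources.list (lists fetched from deb.debian.org would presumably be left behind). A mirror-agnostic sketch, assuming GNU find and with an illustrative function name:

```shell
# Remove downloaded APT index files regardless of mirror hostname, keeping
# the lock file and the partial/ directory that APT expects to find.
clean_apt_lists() {
    local lists_dir="$1"
    find "${lists_dir}" -maxdepth 1 -type f ! -name lock -delete
}

# In the build script this would be called as:
#   clean_apt_lists ${MOUNT_POINT}/var/lib/apt/lists
```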

```bash
# Disable the daily APT timer
rm ${MOUNT_POINT}/etc/systemd/system/timers.target.wants/apt-daily.timer

# Disable daily dpkg backup
cat <<EOF > ${MOUNT_POINT}/etc/cron.daily/dpkg
#!/bin/sh

# Don't do the daily dpkg backup
exit 0
EOF

# We don't want a persistent systemd journal
rmdir ${MOUNT_POINT}/var/log/journal
```

None of these make sense on a router.

```bash
# Enable nftables
ln -s /lib/systemd/system/nftables.service \
    ${MOUNT_POINT}/etc/systemd/system/sysinit.target.wants/nftables.service
```

Ensure we have firewalling enabled automatically.

```bash
# Add systemd-coredump + systemd-timesync user / group
echo "systemd-timesync:x:998:" >> ${MOUNT_POINT}/etc/group
echo "systemd-coredump:x:999:" >> ${MOUNT_POINT}/etc/group
echo "systemd-timesync:!*::" >> ${MOUNT_POINT}/etc/gshadow
echo "systemd-coredump:!*::" >> ${MOUNT_POINT}/etc/gshadow
echo "systemd-timesync:x:998:998:systemd Time Synchronization:/:/usr/sbin/nologin" >> ${MOUNT_POINT}/etc/passwd
echo "systemd-coredump:x:999:999:systemd Core Dumper:/:/usr/sbin/nologin" >> ${MOUNT_POINT}/etc/passwd
echo "systemd-timesync:!*:47358::::::" >> ${MOUNT_POINT}/etc/shadow
echo "systemd-coredump:!*:47358::::::" >> ${MOUNT_POINT}/etc/shadow

# Create /etc/.pwd.lock, otherwise it'll end up in the overlay
touch ${MOUNT_POINT}/etc/.pwd.lock
chmod 600 ${MOUNT_POINT}/etc/.pwd.lock
```

Create a number of users that will otherwise get created at boot, and a lock file that will otherwise get created anyway.

```bash
# Copy config files
cp --recursive --preserve=mode,timestamps ${BASE_DIR}/etc/* ${MOUNT_POINT}/etc/
cp --recursive --preserve=mode,timestamps ${BASE_DIR}/etc-${ARCH}/* ${MOUNT_POINT}/etc/
chroot ${MOUNT_POINT} chown mosquitto /etc/mosquitto/mosquitto.users
chroot ${MOUNT_POINT} chown mosquitto /etc/ssl/mqtt.home.key
```

There are config files that are easier to replace wholesale, some of which are specific to the hardware (e.g. related to network interfaces). See below for some more details.

```bash
# Build symlinks into flash for boot / modules
ln -s /mnt/flash/lib/modules ${MOUNT_POINT}/lib/modules
rmdir ${MOUNT_POINT}/boot
ln -s /mnt/flash/boot ${MOUNT_POINT}/boot
```

The kernel + its modules live outside the squashfs image, on the USB flash drive that the image lives on. That makes for easier kernel upgrades.

```bash
# Put our git revision into os-release
echo -n "GIT_VERSION=" >> ${MOUNT_POINT}/etc/os-release
(cd ${BASE_DIR} ; git describe --tags) >> ${MOUNT_POINT}/etc/os-release
```

Always helpful to be able to check the image itself for what it was built from.

```bash
# Add some stuff to root's .bashrc
cat << EOF >> ${MOUNT_POINT}/root/.bashrc
alias ls='ls -F --color=auto'
eval "\$(dircolors)"

case "\$TERM" in
xterm*|rxvt*)
    PS1="\\[\\e]0;\\u@\\h: \\w\a\\]\$PS1"
    ;;
*)
    ;;
esac
EOF
```

Just some niceties for when I do end up logging in.

```bash
# Build the squashfs
mksquashfs ${MOUNT_POINT} /tmp/router.${ARCH}.squashfs \
    -comp xz
```

Actually build the squashfs image.

```bash
# Save the installed package list off
chroot ${MOUNT_POINT} dpkg --get-selections > /tmp/wip-installed-packages
```

Save off the installed package list. This was particularly useful when trying to replicate the existing router setup and making sure I had all the important packages installed. It doesn’t really serve a purpose now.

In terms of the config files I copy into /etc, shared across both routers are the following:

Breakdown of shared config

- apt config (disable recommends, periodic updates):
  apt/apt.conf.d/10periodic, apt/apt.conf.d/local-recommends

- Adding a default, empty, locale:
  default/locale

- DNS/DHCP:
  dnsmasq.conf, dnsmasq.d/dhcp-ranges, dnsmasq.d/static-ips, hosts, resolv.conf

- Enabling IP forwarding:
  sysctl.conf

- Logs related:
  logrotate.conf, rsyslog.conf

- MQTT related:
  mosquitto/mosquitto.users, mosquitto/conf.d/ssl.conf, mosquitto/conf.d/users.conf, mosquitto/mosquitto.acl, mosquitto/mosquitto.conf, mqtt-arp.conf, ssl/lets-encrypt-r3.crt, ssl/mqtt.home.key, ssl/mqtt.home.crt

- PPP configuration:
  ppp/ip-up.d/0000usepeerdns, ppp/ipv6-up.d/defaultroute, ppp/pap-secrets, ppp/chap-secrets, network/interfaces.d/pppoe-wan

The router specific config is mostly related to networking:

Breakdown of router specific config

- Firewalling:
  nftables.conf

- Interfaces:
  dnsmasq.d/interfaces, network/interfaces.d/eth0, network/interfaces.d/p1, network/interfaces.d/p2, network/interfaces.d/p7, network/interfaces.d/p8

- PPP config (network interface piece):
  ppp/peers/aquiss

- SSH keys:
  ssh/ssh_host_ecdsa_key, ssh/ssh_host_ed25519_key, ssh/ssh_host_rsa_key, ssh/ssh_host_ecdsa_key.pub, ssh/ssh_host_ed25519_key.pub, ssh/ssh_host_rsa_key.pub

- Monitoring:
  collectd/collectd.conf, collectd/collectd.conf.d/network.conf
